离散傅立叶变换

理论分析

行最小基频为 列最小基频为

变换求和次序即等价两次一维傅立叶变换

编程实现

- 基础库

import numpy as np

- 源代码

# 离散傅立叶变换, (744 µs ± 3.82 µs per loop)

def dft2d_dotloop(inputs):

m, n = inputs.shape

result = np.zeros((m, n), dtype=np.complex64)

for u in range(m):

for v in range(n):

for x in range(m):

for y in range(n):

result[u, v] += inputs[x, y] * np.exp(-2j * np.pi * (((x * u) / m) + ((y * v) / n)))

return result

# 离散傅立叶反变换

def idft2d_dotloop(inputs):

m, n = inputs.shape

result = np.zeros((m, n), dtype=np.complex64)

for x in range(m):

for y in range(n):

for u in range(m):

for v in range(n):

result[x, y] += inputs[u, v] * np.exp(2j * np.pi * (((u * x) / m) + ((v * y) / n)))

result /= (m*n)

return result

a = np.array([[1, 1, 3, 5], [1, 1, 1, 1], [1, 1, 2, 1], [1, 1, 1, 1]])

b = np.random.randn(4, 4)

print(a,

dft2d_dotloop(a),

np.fft.fft2(a),

idft2d_dotloop(dft2d_dotloop(a)),

dft2d_dotloop(a)-np.fft.fft2(a),

idft2d_dotloop(dft2d_dotloop(a))-a, sep="\n\n")

print(b,

dft2d_dotloop(b),

np.fft.fft2(b),

idft2d_dotloop(dft2d_dotloop(b)),

dft2d_dotloop(b)-np.fft.fft2(b),

idft2d_dotloop(dft2d_dotloop(b))-b, sep="\n\n")

# 离散傅立叶变换向量化, (70.1 µs ± 214 ns per loop)

def dft2d_vectorloop(inputs):

m, n = inputs.shape

temp = np.zeros((m, n), dtype=np.complex64)

result = np.zeros((m, n), dtype=np.complex64)

for v in range(n):

for y in range(n):

temp[:, v] += inputs[:, y] * np.exp(-2j * np.pi * ((y * v) / n))

for u in range(m):

for x in range(m):

result[u, :] += temp[x, :] * np.exp(-2j * np.pi * ((x * u) / m))

return result

# 离散傅立叶反变换向量化

def idft2d_vectorloop(inputs):

m, n = inputs.shape

temp = np.zeros((m, n), dtype=np.complex64)

result = np.zeros((m, n), dtype=np.complex64)

for y in range(n):

for v in range(n):

temp[:, y] += inputs[:, v] * np.exp(2j * np.pi * ((v * y) / n))

temp /= n

for x in range(m):

for u in range(m):

result[x, :] += temp[u, :] * np.exp(2j * np.pi * ((u * x) / m))

result /= m

return result

a = np.array([[1, 1, 3, 5], [1, 1, 1, 1], [1, 1, 2, 1], [1, 1, 1, 1]])

b = np.random.randn(3, 3)

print(a,

dft2d_vectorloop(a),

np.fft.fft2(a),

idft2d_vectorloop(dft2d_vectorloop(a)),

dft2d_vectorloop(a)-np.fft.fft2(a),

idft2d_vectorloop(dft2d_vectorloop(a))-a, sep="\n\n")

print(b,

dft2d_vectorloop(b),

np.fft.fft2(b),

idft2d_vectorloop(dft2d_vectorloop(b)),

dft2d_vectorloop(b)-np.fft.fft2(b),

idft2d_vectorloop(dft2d_vectorloop(b))-b, sep="\n\n")

"""

Output:

[[1 1 3 5]

[1 1 1 1]

[1 1 2 1]

[1 1 1 1]]

[[23.+0.0000000e+00j -3.+4.0000000e+00j -1.-1.7145056e-15j

-3.-4.0000000e+00j]

[ 5.+0.0000000e+00j -1.+4.0000000e+00j -3.+0.0000000e+00j

-1.-4.0000000e+00j]

[ 7.-7.3478811e-16j -3.+4.0000000e+00j -1.-4.8985874e-16j

-3.-4.0000000e+00j]

[ 5.+0.0000000e+00j -1.+4.0000000e+00j -3.+0.0000000e+00j

-1.-4.0000000e+00j]]

[[23.+0.j -3.+4.j -1.+0.j -3.-4.j]

[ 5.+0.j -1.+4.j -3.+0.j -1.-4.j]

[ 7.+0.j -3.+4.j -1.+0.j -3.-4.j]

[ 5.+0.j -1.+4.j -3.+0.j -1.-4.j]]

[[1.+0.0000000e+00j 1.+4.1633363e-17j 3.-2.7755576e-17j 5.-1.3877788e-16j]

[1.+4.1633363e-17j 1.-2.7755576e-17j 1.-1.3877788e-16j 1.+5.5511151e-17j]

[1.-2.7755576e-17j 1.-1.3877788e-16j 2.+5.5511151e-17j 1.+6.8001160e-16j]

[1.-1.3877788e-16j 1.+5.5511151e-17j 1.+6.8001160e-16j 1.-5.5511151e-17j]]

[[0.+0.00000000e+00j 0.+0.00000000e+00j 0.-1.71450565e-15j

...

[ 9.76770049e-08-3.9736431e-08j 6.67392704e-08+0.0000000e+00j

1.61892952e-07+0.0000000e+00j]

[-6.90229418e-08+3.9736431e-08j -2.05740107e-07+0.0000000e+00j

-2.62418983e-07+0.0000000e+00j]]

"""

- 小结

实现的离散二维傅立叶变换与调用 NumPy 的 fft2 与 ifft2 的 API 结果吻合,通过交换求和次序利用 NumPy 的广播机制矢量化运算 for-loop 速度提高了近十倍

快速傅立叶变换

理论分析

快速傅立叶变换优化了离散傅立叶变换计算复杂度, 因为频域中心共轭对称,所以可分治问题为规模更小的离散傅立叶变换(可不断将原问题折半即蝴蝶变换)

- 一维傅立叶变换有正交基,

是基中的一组向量

- 基频

令

且

编程实现

- 基础库

import numpy as np

- 源代码

# 快速傅立叶变换, (137 µs ± 911 ns per loop)

def fft2d_divideloop(inputs):

m, n = inputs.shape

# fft 需要保证序列是 2^n, 否则补零

if 2**np.ceil(np.log2(m)) != m:

inputs = np.pad(inputs, ((0, int(2**np.ceil(np.log2(m))-m)), (0, 0)), 'constant', constant_values=(0, 0))

if 2**np.ceil(np.log2(n)) != n:

inputs = np.pad(inputs, ((0, 0), (0, int(2**np.ceil(np.log2(n))-n))), 'constant', constant_values=(0, 0))

m, n = inputs.shape

result = np.zeros((m, n), dtype=np.complex64)

for v in range(n):

result[:, v] = fft1d_divideloop(inputs[:, v])

for u in range(m):

result[u, :] = fft1d_divideloop(result[u, :])

return result

# 快速傅立叶反变换

def ifft2d_divideloop(inputs):

m, n = inputs.shape

if 2**np.ceil(np.log2(m)) != m:

inputs = np.pad(inputs, ((0, int(2**np.ceil(np.log2(m))-m)), (0, 0)), 'constant', constant_values=(0, 0))

if 2**np.ceil(np.log2(n)) != n:

inputs = np.pad(inputs, ((0, 0), (0, int(2**np.ceil(np.log2(n))-n))), 'constant', constant_values=(0, 0))

m, n = inputs.shape

result = np.zeros((m, n), dtype=np.complex64)

for y in range(n):

result[:, y] = ifft1d_divideloop(inputs[:, y])

for x in range(m):

result[x, :] = ifft1d_divideloop(result[x, :])

return result

def fft1d_divideloop(inputs):

l = len(inputs)

ll = int(np.log2(l))

# 通过二进制编码重排数据两两组成一对计算元

vector = np.array(reset_data(inputs), dtype=np.complex128)

for i in range(ll):

m = 2**(i+1)

ym = np.exp(-1.j * 2 * np.pi / m)

for j in range(0, l, m):

y = 1

for k in range(m//2):

c0 = vector[k+j]

c1 = vector[k+j+m//2] * y

vector[k+j] = c0 + c1

vector[k+j+m//2] = c0 - c1

y *= ym

return vector

def ifft1d_divideloop(inputs):

l = len(inputs)

ll = int(np.log2(l))

vector = np.array(reset_data(inputs), dtype=np.complex128)

for i in range(ll):

m = 2**(i+1)

ym = np.exp(1.j * 2 * np.pi / m)

for j in range(0, l, m):

y = 1

for k in range(m//2):

c0 = vector[k+j]

c1 = vector[k+j+m//2] * y

vector[k+j] = c0 + c1

vector[k+j+m//2] = c0 - c1

y *= ym

vector /= l

return vector

def reset_data(inputs):

l = len(inputs)

outputs = np.zeros(l, dtype=np.complex128)

for i in range(l):

j = int(reverse_bin(i, l), 2)

outputs[j] = inputs[i]

return outputs

def reverse_bin(numbers, length):

bin_str = bin(numbers)[2:]

if len(bin_str) <= np.log2(length):

bin_str = ("0"*(int(np.log2(length)-len(bin_str))) + bin_str)[::-1]

return bin_str

a = np.array([[1, 1, 3, 5], [1, 1, 1, 1], [1, 1, 2, 1], [1, 1, 1, 1]])

b = np.random.randn(3, 3)

print(a,

fft2d_divideloop(a),

np.fft.fft2(a),

ifft2d_divideloop(fft2d_divideloop(a)),

fft2d_divideloop(a)-np.fft.fft2(a),

ifft2d_divideloop(fft2d_divideloop(a))-a, sep="\n\n")

print(b,

fft2d_divideloop(b),

np.fft.fft2(b),

ifft2d_divideloop(fft2d_divideloop(b))[:-1,:-1],

fft2d_divideloop(b)[:-1,:-1]-np.fft.fft2(b),

ifft2d_divideloop(fft2d_divideloop(b))[:-1,:-1]-b, sep="\n\n")

"""

Output:

[[1 1 3 5]

[1 1 1 1]

[1 1 2 1]

[1 1 1 1]]

[[23.+0.j -3.+4.j -1.+0.j -3.-4.j]

[ 5.+0.j -1.+4.j -3.+0.j -1.-4.j]

[ 7.+0.j -3.+4.j -1.+0.j -3.-4.j]

[ 5.+0.j -1.+4.j -3.+0.j -1.-4.j]]

[[23.+0.j -3.+4.j -1.+0.j -3.-4.j]

[ 5.+0.j -1.+4.j -3.+0.j -1.-4.j]

[ 7.+0.j -3.+4.j -1.+0.j -3.-4.j]

[ 5.+0.j -1.+4.j -3.+0.j -1.-4.j]]

[[1.+0.0000000e+00j 1.+1.2246469e-16j 3.+0.0000000e+00j 5.-1.2246469e-16j]

[1.+0.0000000e+00j 1.+0.0000000e+00j 1.+0.0000000e+00j 1.+0.0000000e+00j]

[1.+0.0000000e+00j 1.+0.0000000e+00j 2.+0.0000000e+00j 1.+0.0000000e+00j]

[1.+0.0000000e+00j 1.+0.0000000e+00j 1.+0.0000000e+00j 1.+0.0000000e+00j]]

[[0.+0.j 0.+0.j 0.+0.j 0.+0.j]

[0.+0.j 0.+0.j 0.+0.j 0.+0.j]

[0.+0.j 0.+0.j 0.+0.j 0.+0.j]

[0.+0.j 0.+0.j 0.+0.j 0.+0.j]]

...

[-2.97851090e-08+2.22742265e-19j 1.43793734e-08+4.58425894e-18j

6.15280351e-08+2.22742265e-19j]

[ 1.02884864e-07+0.00000000e+00j 2.79559491e-08-2.77034351e-17j

-3.54333531e-08+0.00000000e+00j]]

"""

- 小结

实现的二维快速傅立叶变换与调用 NumPy 的 fft2 与 ifft2 的 API 结果吻合,需要注意,进行快速傅立叶变换时必须保证数据的尺寸是 ,对于不满足的情况可以先补零再变换(频域会有所差别)最后反变换后切取原数据位置结果

卷积定理

空域的卷积的傅立叶变换等于傅里叶变换后频域的乘积

空域乘积的傅里叶变换等于空域傅里叶变换后频域的乘积

傅立叶变换是线性的,卷积核可视为冲激函数的线性组合,卷积核的尺寸即冲激函数的定义域,则可利用平移性质与缩放性质证明

矩阵测试

- 调用的库

import torch

import random

from scipy.signal import convolve

- 构造数据

# 实数

a = torch.arange(0,9,1).reshape(3,3).type(torch.float32)

b = torch.arange(1,10,1).reshape(3,3).type(torch.float32)

# 补零

pad_size = 100

a_pad = torch.nn.functional.pad(a, [pad_size]*4, value=0)

b_pad = torch.nn.functional.pad(b, [pad_size]*4, value=0)

print(f"a:\n{a}\na {a.shape}", f"b:\n{b}\nb {b.shape}",

f"a_pad_centre:\n{a_pad[pad_size:pad_size+3, pad_size:pad_size+3]}\na_pad {a_pad.shape}",

f"b_pad_centre:\n{b_pad[pad_size:pad_size+3, pad_size:pad_size+3]}\nb_pad {b_pad.shape}", sep="\n\n")

"""

Output:

a:

tensor([[0., 1., 2.],

[3., 4., 5.],

[6., 7., 8.]])

a torch.Size([3, 3])

b:

tensor([[1., 2., 3.],

[4., 5., 6.],

[7., 8., 9.]])

b torch.Size([3, 3])

a_pad_centre:

tensor([[0., 1., 2.],

[3., 4., 5.],

[6., 7., 8.]])

a_pad torch.Size([203, 203])

b_pad_centre:

tensor([[1., 2., 3.],

[4., 5., 6.],

[7., 8., 9.]])

b_pad torch.Size([203, 203])

"""

- 测试

API 的特性

result = convolve(a, b, 'same')

result_ab = torch.conv2d(a.unsqueeze(0).unsqueeze(0), b.unsqueeze(0).unsqueeze(0), padding=1).squeeze(0).squeeze(0)

# c是b的中心对称(反转核)

c = torch.arange(9,0,-1).reshape(3,3).type(torch.float32)

result_ac = torch.conv2d(a.unsqueeze(0).unsqueeze(0), c.unsqueeze(0).unsqueeze(0), padding=1).squeeze(0).squeeze(0)

print(f"signal-convolve(ab):\n{result}\nis real convolution operation",

f"pytorch-conv2d(ab):\n{result_ab}\nis noly correlation operation",

f"c:\n{c}\nc {c.shape}",

f"pytorch-conv2d(ac):\n{result_ac}\ncorrelation equal real convolution after inverter kernel", sep="\n\n")

"""

Output:

signal-convolve(ab):

[[ 14. 35. 38.]

[ 57. 120. 111.]

[110. 197. 158.]]

is real convolution operation

pytorch-conv2d(ab):

tensor([[ 66., 115., 82.],

[153., 240., 159.],

[ 90., 133., 82.]])

is noly correlation operation

c:

tensor([[9., 8., 7.],

[6., 5., 4.],

[3., 2., 1.]])

c torch.Size([3, 3])

pytorch-conv2d(ac):

tensor([[ 14., 35., 38.],

[ 57., 120., 111.],

[110., 197., 158.]])

correlation equal real convolution after inverter kernel

"""

可知卷积神经网络中的 操作实际上是相关运算(不支持复数运算)

- 测试

与

API

a_k1 = torch.fft.fft2(a)

b_k1 = torch.fft.fft2(b)

a_k2 = torch.fft.fftshift(torch.fft.fft2(a))

b_k2 = torch.fft.fftshift(torch.fft.fft2(b))

a_k3 = torch.fft.fft2(torch.fft.fftshift(a))

b_k3 = torch.fft.fft2(torch.fft.fftshift(b))

print(f"1.a-fft-ifft:\n{torch.fft.ifft2(a_k1)}\nis right reversible operation",

f"2.a-fft-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(a_k1))}\nneed use fftshift and ifftshift operation in pairs",

f"3.a-fft-ifftshift-ifft:\namplitude\n{torch.fft.ifft2(torch.fft.ifftshift(a_k1)).abs()}\nphase\n{torch.fft.ifft2(torch.fft.ifftshift(a_k1)).angle()}\namplitude is right but phase is wrong",

f"4.a-fft-fftshift-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(a_k2))}\nis wrong operation",

f"5.a-fft-fftshift-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(a_k2))}\nis right reversible operation",

f"6.a-fft-fftshift-ifft:\namplitude\n{torch.fft.ifft2(a_k2).abs()}\nphase\n{torch.fft.ifft2(a_k2).angle()}\namplitude is right but phase is wrong",

f"7.a-fftshift-fft-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(a_k3))}\nis wrong operation",

f"8.a-fftshift-fft-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(a_k3))}\nis wrong operation",

f"9.a-fftshift-fft-ifft:\n{torch.fft.ifft2(a_k3)}\nis wrong operation", sep="\n\n")

d = torch.rand((3,3), dtype=torch.complex128)

d_pad = torch.nn.functional.pad(d, [1]*4, value=0)

d_pad_k1 = torch.fft.fft2(d_pad)

print(f"\n10.complex d\n{d_pad}\nfft-ifft:\n", torch.fft.ifft2(d_pad_k1))

a_k11 = a_k1.reshape(-1)

index = [i for i in range(0,9,1)]

random.shuffle(index)

a_k12 = torch.empty_like(a_k11)

for i, j in enumerate(index):

a_k12[j] = a_k11[i]

a_k13 = a_k12.reshape(3,3)

print(f"\n11.fft-shuffe k-space-ifft:\n", torch.fft.ifft2(a_k13))

"""

Output:

1.a-fft-ifft:

tensor([[0.+0.j, 1.+0.j, 2.+0.j],

[3.+0.j, 4.+0.j, 5.+0.j],

[6.+0.j, 7.+0.j, 8.+0.j]])

is right reversible operation

2.a-fft-ifft-ifftshift:

tensor([[4.+0.j, 5.+0.j, 3.+0.j],

[7.+0.j, 8.+0.j, 6.+0.j],

[1.+0.j, 2.+0.j, 0.+0.j]])

need use fftshift and ifftshift operation in pairs

3.a-fft-ifftshift-ifft:

amplitude

tensor([[0.0000, 1.0000, 2.0000],

[3.0000, 4.0000, 5.0000],

[6.0000, 7.0000, 8.0000]])

phase

tensor([[ 0.0000e+00, -2.0944e+00, 2.0944e+00],

[-2.0944e+00, 2.0944e+00, 0.0000e+00],

[ 2.0944e+00, -3.4060e-08, -2.0944e+00]])

amplitude is right but phase is wrong

4.a-fft-fftshift-ifft-ifftshift:

tensor([[-2.0000-3.4641e+00j, 5.0000+0.0000e+00j, -1.5000+2.5981e+00j],

[ 7.0000+2.3842e-07j, -4.0000+6.9282e+00j, -3.0000-5.1962e+00j],

[-0.5000+8.6603e-01j, -1.0000-1.7321e+00j, 0.0000+0.0000e+00j]])

is wrong operation

5.a-fft-fftshift-ifftshift-ifft:

tensor([[0.+0.j, 1.+0.j, 2.+0.j],

[3.+0.j, 4.+0.j, 5.+0.j],

[6.+0.j, 7.+0.j, 8.+0.j]])

is right reversible operation

6.a-fft-fftshift-ifft:

amplitude

tensor([[0.0000, 1.0000, 2.0000],

[3.0000, 4.0000, 5.0000],

[6.0000, 7.0000, 8.0000]])

phase

tensor([[ 0.0000e+00, 2.0944e+00, -2.0944e+00],

[ 2.0944e+00, -2.0944e+00, 0.0000e+00],

[-2.0944e+00, 3.4060e-08, 2.0944e+00]])

amplitude is right but phase is wrong

7.a-fftshift-fft-ifft-ifftshift:

tensor([[2.9802e-08+0.j, 1.0000e+00+0.j, 2.0000e+00+0.j],

[3.0000e+00+0.j, 4.0000e+00+0.j, 5.0000e+00+0.j],

[6.0000e+00+0.j, 7.0000e+00+0.j, 8.0000e+00+0.j]])

is wrong operation

8.a-fftshift-fft-ifftshift-ifft:

tensor([[ 8.0000e+00+0.0000e+00j, -3.0000e+00-5.1962e+00j,

-3.5000e+00+6.0622e+00j],

[-1.0000e+00-1.7321e+00j, 8.9407e-08-5.9605e-08j,

1.0000e+00-5.9605e-08j],

[-2.5000e+00+4.3301e+00j, 3.0000e+00+0.0000e+00j,

-2.0000e+00-3.4641e+00j]])

is wrong operation

9.a-fftshift-fft-ifft:

tensor([[8.0000e+00+0.j, 6.0000e+00+0.j, 7.0000e+00+0.j],

[2.0000e+00+0.j, 2.9802e-08+0.j, 1.0000e+00+0.j],

[5.0000e+00+0.j, 3.0000e+00+0.j, 4.0000e+00+0.j]])

is wrong operation

10.complex d

tensor([[0.0000+0.0000j, 0.0000+0.0000j, 0.0000+0.0000j, 0.0000+0.0000j,

0.0000+0.0000j],

[0.0000+0.0000j, 0.3199+0.5881j, 0.4417+0.5690j, 0.2725+0.1384j,

0.0000+0.0000j],

[0.0000+0.0000j, 0.3823+0.3599j, 0.1309+0.5448j, 0.7290+0.3217j,

0.0000+0.0000j],

[0.0000+0.0000j, 0.9242+0.5364j, 0.5341+0.2874j, 0.0283+0.2953j,

0.0000+0.0000j],

[0.0000+0.0000j, 0.0000+0.0000j, 0.0000+0.0000j, 0.0000+0.0000j,

0.0000+0.0000j]], dtype=torch.complex128)

fft-ifft:

tensor([[-4.4409e-18+6.6613e-18j, -1.5949e-17-1.3648e-17j,

-6.6582e-17+9.8469e-18j, 3.1702e-17-4.2830e-17j,

1.0860e-17-4.4394e-18j],

[-1.0408e-17-2.7756e-17j, 3.1988e-01+5.8810e-01j,

4.4173e-01+5.6904e-01j, 2.7248e-01+1.3844e-01j,

0.0000e+00-5.5511e-17j],

[ 2.0817e-17-8.3267e-17j, 3.8231e-01+3.5993e-01j,

1.3086e-01+5.4476e-01j, 7.2897e-01+3.2170e-01j,

-5.5511e-17-8.3267e-17j],

[ 0.0000e+00-8.3267e-17j, 9.2417e-01+5.3639e-01j,

5.3412e-01+2.8744e-01j, 2.8261e-02+2.9532e-01j,

0.0000e+00+0.0000e+00j],

[ 3.3307e-17-3.6637e-17j, -1.3106e-17+7.6299e-18j,

-5.2554e-18-1.2412e-17j, -3.9503e-18-3.2297e-17j,

-1.0995e-17-1.5101e-17j]], dtype=torch.complex128)

11.fft-shuffe k-space-ifft:

tensor([[ 0.0000+5.9605e-08j, -2.0000+3.4641e+00j, -2.5000-6.0622e+00j],

[-3.5000+8.6603e-01j, -5.5000-2.5981e+00j, 4.5000-8.6603e-01j],

[-2.5000-4.3301e+00j, 3.0000-1.7321e+00j, -5.0000+3.4641e+00j]])

"""

第一,可知 与

是一组可逆运算(操作的定义域为实数与复数域)通常 shift 使得低频在中心高频在四周便于分析频谱图,可通过傅立叶变换平移定理先把原始图像做变换再做

或先做

后再根据频域图像的共轭对称性变换,

与

是后者

第二,可知在实数 运算后的频域只进行

或

运算再

运算虽然最终不改变实数的值,但是由于

的算法逻辑必须运用共轭复数因此

元素顺序不匹配将导致存在错误相位,若是任意打乱元素顺序则完全错误

- 验证

API 的卷积定理

result_k1 = a_k1 * b_k1

result_k2 = a_k2 * b_k2

result_k3 = a_k3 * b_k3

a_pad_k1 = torch.fft.fft2(a_pad)

b_pad_k1 = torch.fft.fft2(b_pad)

a_pad_k2 = torch.fft.fftshift(torch.fft.fft2(a_pad))

b_pad_k2 = torch.fft.fftshift(torch.fft.fft2(b_pad))

a_pad_k3 = torch.fft.fft2(torch.fft.fftshift(a_pad))

b_pad_k3 = torch.fft.fft2(torch.fft.fftshift(b_pad))

result_pad_k1 = a_pad_k1 * b_pad_k1

result_pad_k2 = a_pad_k2 * b_pad_k2

result_pad_k3 = a_pad_k3 * b_pad_k3

print(

f"1.result-fft-(ab)-ifft:\n{torch.fft.ifft2(result_k1)}",

f"2.result-fft-(ab)-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(result_k1))}",

f"3.result-fft-(ab)-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(result_k1))}",

f"4.result-fft-fftshift-(ab)-ifft:\n{torch.fft.ifft2(result_k2)}",

f"5.result-fft-fftshift-(ab)-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(result_k2))}",

f"6.result-fft-fftshift-(ab)-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(result_k2))}",

f"7.result-fftshift-fft-(ab)-ifft:\n{torch.fft.ifft2(result_k3)}",

f"8.result-fftshift-fft-(ab)-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(result_k3))}",

f"9.result-fftshift-fft-(ab)-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(result_k3))}",

"all is wrong be due to different from the actual convolution operation need pad zero", sep="\n"

)

print(

"对空域原始图像进行补零",

f"1.result_pad-fft-(ab)-ifft:\n{torch.fft.ifft2(result_pad_k1)[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"2.result_pad-fft-(ab)-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(result_pad_k1))[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"3.result_pad-fft-(ab)-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(result_pad_k1))[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"4.result_pad-fft-fftshift-(ab)-ifft:\n{torch.fft.ifft2(result_pad_k2)[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"5.result_pad-fft-fftshift-(ab)-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(result_pad_k2))[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"6.result_pad-fft-fftshift-(ab)-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(result_pad_k2))[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"7.result_pad-fftshift-fft-(ab)-ifft:\n{torch.fft.ifft2(result_pad_k3)[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"8.result_pad-fftshift-fft-(ab)-ifftshift-ifft:\n{torch.fft.ifft2(torch.fft.ifftshift(result_pad_k3))[pad_size:pad_size+3, pad_size:pad_size+3]}",

f"9.result_pad-fftshift-fft-(ab)-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(result_pad_k3))[pad_size:pad_size+3, pad_size:pad_size+3]}",

"only 3 is right operation and 6 amplitude is right but phase is wrong", sep="\n"

)

e = torch.rand((3,3), dtype=torch.complex128)

e_pad = torch.nn.functional.pad(e, [1]*4, value=0)

result_1 = convolve(d, e, 'same')

e_pad_k1 = torch.fft.fft2(e_pad)

result_pad_k4 = e_pad_k1 * d_pad_k1

print(f"complex de conv:\n{result_1}", f"result-fft-(de)-ifft-ifftshift:\n{torch.fft.ifftshift(torch.fft.ifft2(result_pad_k4))[1:4,1:4]}", sep="\n\n")

"""

Output:

1.result-fft-(ab)-ifft:

tensor([[210.+0.j, 210.+0.j, 201.+0.j],

[210.+0.j, 210.+0.j, 201.+0.j],

[129.+0.j, 129.+0.j, 120.+0.j]])

2.result-fft-(ab)-ifftshift-ifft:

tensor([[ 210.0000+0.0000e+00j, -105.0000-1.8187e+02j, -100.5000+1.7407e+02j],

[-105.0000-1.8187e+02j, -105.0000+1.8187e+02j, 201.0000+7.6294e-06j],

[ -64.5000+1.1172e+02j, 129.0000+3.8147e-06j, -60.0000-1.0392e+02j]])

3.result-fft-(ab)-ifft-ifftshift:

tensor([[210.+0.j, 201.+0.j, 210.+0.j],

[129.+0.j, 120.+0.j, 129.+0.j],

[210.+0.j, 201.+0.j, 210.+0.j]])

4.result-fft-fftshift-(ab)-ifft:

tensor([[ 210.0000+0.0000e+00j, -105.0000+1.8187e+02j, -100.5000-1.7407e+02j],

[-105.0000+1.8187e+02j, -105.0000-1.8187e+02j, 201.0000-7.6294e-06j],

[ -64.5000-1.1172e+02j, 129.0000-3.8147e-06j, -60.0000+1.0392e+02j]])

5.result-fft-fftshift-(ab)-ifftshift-ifft:

tensor([[210.+0.j, 210.+0.j, 201.+0.j],

[210.+0.j, 210.+0.j, 201.+0.j],

[129.+0.j, 129.+0.j, 120.+0.j]])

6.result-fft-fftshift-(ab)-ifft-ifftshift:

tensor([[-105.0000-1.8187e+02j, 201.0000-7.6294e-06j, -105.0000+1.8187e+02j],

[ 129.0000-3.8147e-06j, -60.0000+1.0392e+02j, -64.5000-1.1172e+02j],

[-105.0000+1.8187e+02j, -100.5000-1.7407e+02j, 210.0000+0.0000e+00j]])

7.result-fftshift-fft-(ab)-ifft:

tensor([[210.+0.j, 201.+0.j, 210.+0.j],

[129.+0.j, 120.+0.j, 129.+0.j],

[210.+0.j, 201.+0.j, 210.+0.j]])

8.result-fftshift-fft-(ab)-ifftshift-ifft:

tensor([[ 210.0000+0.0000e+00j, -100.5000-1.7407e+02j, -105.0000+1.8187e+02j],

[ -64.5000-1.1172e+02j, -60.0000+1.0392e+02j, 129.0000-3.8147e-06j],

[-105.0000+1.8187e+02j, 201.0000-7.6294e-06j, -105.0000-1.8187e+02j]])

9.result-fftshift-fft-(ab)-ifft-ifftshift:

tensor([[120.+0.j, 129.+0.j, 129.+0.j],

[201.+0.j, 210.+0.j, 210.+0.j],

[201.+0.j, 210.+0.j, 210.+0.j]])

all is wrong be due to different from the actual convolution operation need pad zero

对空域原始图像进行补零

1.result_pad-fft-(ab)-ifft:

tensor([[-5.8964e-08+2.1684e-08j, -2.1942e-07+3.4234e-09j,

-1.6008e-07-6.6212e-08j],

[-6.9728e-08+1.3858e-07j, -2.2586e-07+8.7456e-08j,

-1.8794e-07-2.5025e-08j],

[-1.1340e-07+1.5226e-07j, -1.6811e-07+8.2379e-08j,

-8.9968e-08-5.6724e-08j]])

2.result_pad-fft-(ab)-ifftshift-ifft:

tensor([[-1.3591e-08+8.3625e-08j, -1.3870e-07-8.8931e-08j,

1.1840e-07+1.7747e-07j],

[-5.2241e-08+1.2235e-07j, 1.7944e-07-7.4823e-08j,

-1.1728e-07-1.6430e-08j],

[ 3.9962e-08-1.4667e-07j, -1.0263e-07+6.7573e-08j,

1.4530e-08+5.6865e-08j]])

3.result_pad-fft-(ab)-ifft-ifftshift:

tensor([[ 14.0000+2.0093e-07j, 35.0000+7.8955e-08j, 38.0000-4.8176e-07j],

[ 57.0000+6.9471e-07j, 120.0000-5.9769e-07j, 111.0000-6.6666e-07j],

[110.0000-1.4929e-06j, 197.0000-6.5903e-07j, 158.0000-1.7279e-06j]])

4.result_pad-fft-fftshift-(ab)-ifft:

tensor([[-1.1229e-08+6.8029e-08j, -1.4203e-07-7.1366e-08j,

1.1801e-07+1.5188e-07j],

[-7.8902e-08+1.3160e-07j, 1.9774e-07-8.8659e-08j,

-1.2857e-07+3.8880e-09j],

[ 4.3324e-08-1.2204e-07j, -1.1573e-07+5.5895e-08j,

4.3920e-08+5.3604e-08j]])

5.result_pad-fft-fftshift-(ab)-ifftshift-ifft:

tensor([[-5.8964e-08+2.1684e-08j, -2.1942e-07+3.4234e-09j,

-1.6008e-07-6.6212e-08j],

[-6.9728e-08+1.3858e-07j, -2.2586e-07+8.7456e-08j,

-1.8794e-07-2.5025e-08j],

[-1.1340e-07+1.5226e-07j, -1.6811e-07+8.2379e-08j,

-8.9968e-08-5.6724e-08j]])

6.result_pad-fft-fftshift-(ab)-ifft-ifftshift:

tensor([[ 13.9732+8.6609e-01j, -34.9623-1.6244e+00j, 37.9818+1.1760e+00j],

[ -56.9386-2.6454e+00j, 119.9425+3.7136e+00j, -110.9867-1.7177e+00j],

[ 109.9473+3.4041e+00j, -196.9764-3.0486e+00j, 158.0000-2.6748e-06j]])

7.result_pad-fftshift-fft-(ab)-ifft:

tensor([[9.7335e-08+4.7548e-08j, 1.5600e-07+3.4149e-08j, 2.1271e-07+1.4801e-08j],

[1.3279e-07+1.7300e-07j, 1.8891e-07+1.5591e-07j, 2.2441e-07+1.1166e-07j],

[1.5882e-08+4.6231e-08j, 9.1089e-08-1.6672e-08j, 1.0897e-07+5.8071e-09j]])

8.result_pad-fftshift-fft-(ab)-ifftshift-ifft:

tensor([[-3.9915e-08-8.2888e-08j, 7.2405e-08+5.6737e-08j,

-1.3725e-07-6.1253e-09j],

[ 1.8420e-07+8.3736e-08j, -2.4098e-07-1.1846e-07j,

2.5491e-07+7.5711e-08j],

[-1.0708e-07-1.2528e-07j, 2.0488e-07+9.8290e-08j,

-1.9498e-07-5.8708e-08j]])

9.result_pad-fftshift-fft-(ab)-ifft-ifftshift:

tensor([[120.0000+1.8112e-06j, 111.0000+2.2686e-06j, 72.0000+5.2352e-07j],

[197.0000+4.2891e-06j, 158.0000+2.2339e-06j, 93.0000+1.1955e-06j],

[166.0000+2.6483e-06j, 127.0000+2.0043e-06j, 72.0000+3.3807e-07j]])

only 3 is right operation and 6 amplitude is right but phase is wrong

complex de conv:

[[-1.07580643-0.71033082j -0.0632657 -0.62569138j -0.14987516+0.17276975j]

[-0.99139409-1.26810433j 0.64639005-0.5293978j 0.45730436-0.17167671j]

[-1.09194184-0.25787399j 1.07932259-0.40541701j 1.06696421-0.177788j ]]

result-fft-(de)-ifft-ifftshift:

tensor([[-1.0758-0.7103j, -0.0633-0.6257j, -0.1499+0.1728j],

[-0.9914-1.2681j, 0.6464-0.5294j, 0.4573-0.1717j],

[-1.0919-0.2579j, 1.0793-0.4054j, 1.0670-0.1778j]],

dtype=torch.complex128)

"""

第一,数据不补零直接进行 运算再频域乘法与

的结果将不一致,原因不是

无法实现卷积定理而是数据进行空域

时为了保持数据尺寸不变通常会先补一圈一半卷积核尺寸的零,因此数据进行

运算前也需要先补一圈一半卷积核尺寸的零结果才会一致,也可补一圈超过一半卷积核尺寸的零,运算后切取目标区域即可(后者类似用包含前者的父操作间接得到目标结果,需要注意前者与后者的运算过程中的频域不同仅最终可得到相同结果)

第二,空域卷积定理实现的严格操作顺序是,先进行傅立叶变换 ,接着进行频域点乘,然后进行傅立叶反变换

,最后进行平移

(操作的定义域为实数与复数域)

图像测试

- 调用的库

import torch

from PIL import Image

from scipy.signal import convolve

from torchvision import transforms

import matplotlib.pyplot as plot

- 测试代码

# 加载图像

path = r'/Users/WangHao/工作/纳米光子中心/全光相关/实验-0303/0303.png'

img = Image.open(path).convert('L')

img = transforms.Compose([transforms.ToTensor(), transforms.Resize((201, 201))])(img)

img = img.squeeze(0)

img_k = torch.fft.fft2(img)

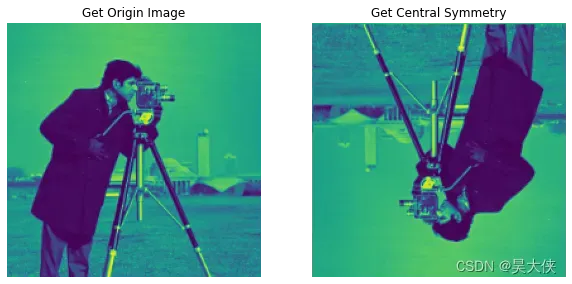

# 傅立叶变换与逆变换

img_1 = torch.fft.ifft2(img_k)

# 连续两次傅立叶变换等价原图中心对称

img_2 = torch.fft.fft2(img_k)

plot.figure("contrast", figsize=(10,20))

plot.subplot(121)

plot.imshow(img_1.abs())

plot.axis("off")

plot.title("Get Origin Image")

plot.subplot(122)

plot.imshow(img_2.abs())

plot.axis("off")

plot.title("Get Central Symmetry")

# 比较空域卷积与频域卷积

a = torch.rand(3,3)

# a = torch.rand((3,3), dtype=torch.complex128)

# a = torch.randn(3,3)

# a = torch.randn((3,3), dtype=torch.complex128)

img_1 = torch.nn.functional.pad(img, [1,1,1,1], value=0)

img_k_1 = torch.fft.fft2(img_1)

b = torch.nn.functional.pad(a, [100,100,100,100], value=0)

b_k_1 = torch.fft.fft2(b)

b_k_1_conj = torch.fft.fft2(b.reshape(-1).flipud().reshape(203, 203))

# 卷积运算

result1 = torch.tensor(convolve(img_1, a, 'same'))

# 相关运算

result2 = torch.conv2d(img_1.unsqueeze(0).unsqueeze(0), a.unsqueeze(0).unsqueeze(0), padding=1).squeeze(0).squeeze(0)

# 卷积运算

result3 = torch.fft.ifftshift(torch.fft.ifft2(img_k_1 * b_k_1))

# 相关运算

result4 = torch.fft.ifftshift(torch.fft.ifft2(img_k_1 * b_k_1_conj))

error1 = result1.abs() - result3.abs()

error2 = result2.abs() - result4.abs()

plot.figure(0)

plot.subplot(221)

plot.imshow(img)

plot.axis("off")

plot.subplot(222)

plot.imshow(img_1)

plot.axis("off")

plot.subplot(223)

plot.imshow(a)

plot.axis("off")

plot.subplot(224)

plot.imshow(b)

plot.axis("off")

plot.figure(1)

plot.subplot(121)

plot.imshow(result1.abs())

plot.axis("off")

plot.colorbar()

plot.subplot(122)

plot.imshow(result2.abs())

plot.axis("off")

plot.colorbar()

plot.figure(2)

plot.subplot(121)

plot.imshow(result3.abs())

plot.axis("off")

plot.colorbar()

plot.subplot(122)

plot.imshow(result3.angle())

plot.axis("off")

plot.colorbar()

plot.figure(3)

plot.subplot(121)

plot.imshow(result4.abs())

plot.axis("off")

plot.colorbar()

plot.subplot(122)

plot.imshow(result4.angle())

plot.axis("off")

plot.colorbar()

plot.figure(4)

plot.subplot(121)

plot.imshow(error1)

plot.axis("off")

plot.colorbar()

plot.subplot(122)

plot.imshow(error2)

plot.axis("off")

plot.colorbar()

- 小结

第一,通过快速傅立叶变换运算可以实现图像的卷积运算。以图像尺寸为 254 卷积核尺寸为 3 的情况为例,卷积运算 的计算复杂度

而快速傅立叶变换

的计算复杂度

处于同一量级,但是卷积运算的计算复杂度会随着卷积核变大而成倍增加,若此例中卷积核尺寸为 9 倍则

大过快速傅立叶变换一个量级

第二,实域非恒正卷积核的通过傅立叶变换实现卷机得到的结果将存在不为零的相位,因为傅立叶变换有系统误差结果带有极小的虚部, 当复数的幅度为负数时复数的相位就会非常接近 或

而不是零

文章出处登录后可见!