1:输入端

(1)Mosaic数据增强

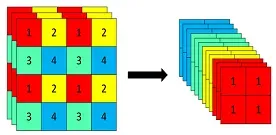

Yolov5的输入端采用了和Yolov4一样的Mosaic数据增强的方式。Mosaic是参考2019年底提出的CutMix数据增强的方式,但CutMix只使用了两张图片进行拼接,而Mosaic数据增强则采用了4张图片,随机缩放、裁剪、排布再进行拼接。

优点:丰富数据集,随机使用4张图片,裁剪缩放后再随机分布进行拼接,大大丰富了检测数据集,特别是随机缩放增加了很多小目标,让网络的鲁棒性更好。

```python
def load_image(self, index):
    # Loads 1 image from the dataset; returns img, original hw, resized hw
    img = self.imgs[index]
    if img is None:  # not cached
        path = self.img_files[index]
        img = cv2.imread(path)  # BGR
        assert img is not None, 'Image Not Found ' + path
        h0, w0 = img.shape[:2]  # orig hw
        r = self.img_size / max(h0, w0)  # ratio
        if r != 1:  # if sizes are not equal
            img = cv2.resize(img, (int(w0 * r), int(h0 * r)),
                             interpolation=cv2.INTER_AREA if r < 1 and not self.augment else cv2.INTER_LINEAR)
        return img, (h0, w0), img.shape[:2]  # img, hw_original, hw_resized
    else:
        return self.imgs[index], self.img_hw0[index], self.img_hw[index]  # img, hw_original, hw_resized
```

```python
def load_mosaic(self, index):
    # Loads 4 images into one mosaic
    labels4, segments4 = [], []
    s = self.img_size
    # mosaic_border is [-s/2, -s/2], so the center is drawn from (s/2, 3s/2) = (320, 960) for s = 640
    yc, xc = [int(random.uniform(-x, 2 * s + x)) for x in self.mosaic_border]  # mosaic center (yc, xc); assume (500, 600)
    indices = [index] + random.choices(self.indices, k=3)  # 3 additional image indices
    for i, index in enumerate(indices):
        # Load image: a (1080, 1920) input is resized to (360, 640) when img_size = 640
        img, _, (h, w) = load_image(self, index)

        # place img in img4
        if i == 0:  # top left
            img4 = np.full((s * 2, s * 2, img.shape[2]), 114, dtype=np.uint8)  # base image with 4 tiles, filled with gray (114)
            x1a, y1a, x2a, y2a = max(xc - w, 0), max(yc - h, 0), xc, yc  # xmin, ymin, xmax, ymax (large image); for h = w = 640: 0 0 600 500
            x1b, y1b, x2b, y2b = w - (x2a - x1a), h - (y2a - y1a), w, h  # xmin, ymin, xmax, ymax (small image); for h = w = 640: 40 140 640 640
        elif i == 1:  # top right
            x1a, y1a, x2a, y2a = xc, max(yc - h, 0), min(xc + w, s * 2), yc
            x1b, y1b, x2b, y2b = 0, h - (y2a - y1a), min(w, x2a - x1a), h
        elif i == 2:  # bottom left
            x1a, y1a, x2a, y2a = max(xc - w, 0), yc, xc, min(s * 2, yc + h)
            x1b, y1b, x2b, y2b = w - (x2a - x1a), 0, w, min(y2a - y1a, h)
        elif i == 3:  # bottom right
            x1a, y1a, x2a, y2a = xc, yc, min(xc + w, s * 2), min(s * 2, yc + h)
            x1b, y1b, x2b, y2b = 0, 0, min(w, x2a - x1a), min(y2a - y1a, h)

        img4[y1a:y2a, x1a:x2a] = img[y1b:y2b, x1b:x2b]  # img4[ymin:ymax, xmin:xmax]
        # Offset of the small image inside the large one, used to move the labels into mosaic coordinates
        padw = x1a - x1b
        padh = y1a - y1b

        # Labels
        labels, segments = self.labels[index].copy(), self.segments[index].copy()
        if labels.size:
            # Shift the box positions by the offsets
            labels[:, 1:] = xywhn2xyxy(labels[:, 1:], w, h, padw, padh)  # normalized xywh to pixel xyxy format
            segments = [xyn2xy(x, w, h, padw, padh) for x in segments]
        labels4.append(labels)
        segments4.extend(segments)

    # Concat/clip labels
    labels4 = np.concatenate(labels4, 0)
    # Keep every coordinate in labels4[:, 1:] within [0, 2 * s]; out=x writes the clipped values back in place
    for x in (labels4[:, 1:], *segments4):
        np.clip(x, 0, 2 * s, out=x)  # clip when using random_perspective()
    # img4, labels4 = replicate(img4, labels4)  # replicate

    # Augment; when segments4 is [] copy_paste does nothing
    img4, labels4, segments4 = copy_paste(img4, labels4, segments4, p=self.hyp['copy_paste'])
    # Randomly rotate, translate, scale, shear and perspective-warp the mosaic,
    # then bring it back to the input size img_size: [1280, 1280, 3] => [640, 640, 3]
    # (see yolov5数据增强)
    img4, labels4 = random_perspective(img4, labels4, segments4,
                                       degrees=self.hyp['degrees'],
                                       translate=self.hyp['translate'],
                                       scale=self.hyp['scale'],
                                       shear=self.hyp['shear'],
                                       perspective=self.hyp['perspective'],
                                       border=self.mosaic_border)  # border to remove

    return img4, labels4
```

I gave this code a quick run myself.
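The label shift in the mosaic step is easier to follow on a single box. The sketch below re-implements the conversion from normalized xywh to pixel xyxy with NumPy; `xywhn_to_xyxy` is an illustrative stand-in for the `xywhn2xyxy` helper called above, not the library function itself, and the box values are made up to match the h = w = 640 top-left tile from the code comments.

```python
import numpy as np

def xywhn_to_xyxy(boxes, w, h, padw=0, padh=0):
    """Convert normalized (cx, cy, bw, bh) boxes to pixel (x1, y1, x2, y2), shifted by the tile offset."""
    boxes = np.asarray(boxes, dtype=np.float64)
    out = np.empty_like(boxes)
    out[:, 0] = w * (boxes[:, 0] - boxes[:, 2] / 2) + padw  # left
    out[:, 1] = h * (boxes[:, 1] - boxes[:, 3] / 2) + padh  # top
    out[:, 2] = w * (boxes[:, 0] + boxes[:, 2] / 2) + padw  # right
    out[:, 3] = h * (boxes[:, 1] + boxes[:, 3] / 2) + padh  # bottom
    return out

# Top-left tile of the example: (x1a, y1a) = (0, 0) and (x1b, y1b) = (40, 140)
padw, padh = 0 - 40, 0 - 140
box = [[0.5, 0.5, 0.25, 0.25]]  # assumed label: centered box, a quarter of the image wide and high
shifted = xywhn_to_xyxy(box, w=640, h=640, padw=padw, padh=padh)
print(shifted)  # [[200. 100. 360. 260.]]
```

The negative offsets express that the top-left tile is cropped: its first 40 columns and 140 rows fall outside the mosaic canvas, so the box moves left and up by the same amounts.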

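(2) Adaptive image scaling

In common object detection algorithms the input images come in different sizes, so the usual approach is to scale every image to one standard size, such as the 640*640 often used with Yolo, before feeding it to the detection network.

In real projects many images have different aspect ratios, so after scaling and padding, the padded borders differ in size from image to image. Heavy padding adds redundant information and slows down inference. Yolov5 therefore modifies the letterbox function in datasets.py so that it adds the minimum amount of padding to the original image (used only at inference).

For the underlying calculation, see: 深入浅出Yolo系列之Yolov5核心基础知识完整讲解 (Zhihu, zhihu.com)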
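The padding arithmetic can be checked by hand for a 1080*1920 image. The helper below is a minimal sketch of the size calculation only (no resizing or border drawing); `letterbox_shape` and its parameter names are invented for this example, with the 640 target size and stride of 32 taken from the defaults of letterbox.

```python
def letterbox_shape(h, w, new_size=640, stride=32):
    """Return (resized_h, resized_w, pad_h, pad_w) for minimal stride-aligned padding."""
    r = min(new_size / h, new_size / w)              # scale so the longer side fits new_size
    new_h, new_w = int(round(h * r)), int(round(w * r))
    pad_h = (new_size - new_h) % stride               # keep only the remainder modulo the stride
    pad_w = (new_size - new_w) % stride
    return new_h, new_w, pad_h, pad_w

new_h, new_w, pad_h, pad_w = letterbox_shape(1080, 1920)
print(new_h, new_w, pad_h, pad_w)      # 360 640 24 0
print(new_h + pad_h, new_w + pad_w)    # 384 640
```

Padding to a full square would add 280 rows; taking the remainder modulo the stride adds only 24 (12 on top, 12 at the bottom), so the network sees a 384*640 input instead of 640*640.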

```python
def letterbox(im, new_shape=(640, 640), color=(114, 114, 114), auto=True, scaleFill=False, scaleup=True, stride=32):
    # Resize and pad image while meeting stride-multiple constraints
    shape = im.shape[:2]  # current shape [height, width], e.g. [1080, 1920]
    if isinstance(new_shape, int):
        new_shape = (new_shape, new_shape)

    # Scale ratio (new / old)
    r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])  # 1/3
    if not scaleup:  # only scale down, do not scale up (for better val mAP)
        r = min(r, 1.0)

    # Compute padding
    ratio = r, r  # width, height ratios
    new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))  # [640, 360]
    dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1]  # wh padding [0, 280]
    if auto:  # minimum rectangle
        dw, dh = np.mod(dw, stride), np.mod(dh, stride)  # wh padding 0, 24
    elif scaleFill:  # stretch
        dw, dh = 0.0, 0.0
        new_unpad = (new_shape[1], new_shape[0])
        ratio = new_shape[1] / shape[1], new_shape[0] / shape[0]  # width, height ratios

    dw /= 2  # divide padding into 2 sides: 0
    dh /= 2  # 12

    if shape[::-1] != new_unpad:  # resize
        im = cv2.resize(im, new_unpad, interpolation=cv2.INTER_LINEAR)
    top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))  # 12, 12
    left, right = int(round(dw - 0.1)), int(round(dw + 0.1))  # 0, 0
    im = cv2.copyMakeBorder(im, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color)  # add border
    return im, ratio, (dw, dh)
```

(3) Adaptive anchor box computation

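In Yolo algorithms, each dataset comes with initial anchor boxes of preset width and height. During training the network predicts boxes relative to these initial anchors, compares them with the ground-truth boxes, computes the difference, and backpropagates to update the network parameters.

In Yolov3 and Yolov4, the initial anchors for a new dataset are computed by running a separate k-means clustering program. Yolov5 embeds this step in the training code: on every training run it checks the anchors against the training set and recomputes the best anchor values when the current ones fit poorly.

The code is in utils/autoanchor.py.

For background reading, see 使用k-means聚类anchors and 【YOLOV5-5.x 源码解读】autoanchor.py.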

```python
# Auto-anchor utils

import random

import numpy as np
import torch
import yaml
from tqdm import tqdm

from utils.general import colorstr


def check_anchor_order(m):
    # Check anchor order against stride order for YOLOv5 Detect() module m, and correct if necessary
    a = m.anchor_grid.prod(-1).view(-1)  # anchor area
    da = a[-1] - a[0]  # delta a: area difference between the last and the first anchor
    ds = m.stride[-1] - m.stride[0]  # delta s: difference between the last and the first stride
    # If the anchor order and the stride order disagree, flip the anchors
    if da.sign() != ds.sign():  # same order; torch.sign(x) returns 1 for x > 0 and -1 for x < 0
        print('Reversing anchor order')
        m.anchors[:] = m.anchors.flip(0)
        m.anchor_grid[:] = m.anchor_grid.flip(0)
```

```python
def check_anchors(dataset, model, thr=4.0, imgsz=640):
    # Check anchor fit to data, recompute if necessary
    prefix = colorstr('autoanchor: ')
    print(f'\n{prefix}Analyzing anchors... ', end='')
    m = model.module.model[-1] if hasattr(model, 'module') else model.model[-1]  # Detect()
    shapes = imgsz * dataset.shapes / dataset.shapes.max(1, keepdims=True)  # scale the longer side of every image to imgsz
    scale = np.random.uniform(0.9, 1.1, size=(shapes.shape[0], 1))  # augment scale
    wh = torch.tensor(np.concatenate([l[:, 3:5] * s for s, l in zip(shapes * scale, dataset.labels)])).float()  # wh in pixels

    def metric(k):  # compute metric
        r = wh[:, None] / k[None]
        x = torch.min(r, 1. / r).min(2)[0]  # ratio metric
        best = x.max(1)[0]  # best_x
        aat = (x > 1. / thr).float().sum(1).mean()  # anchors above threshold
        bpr = (best > 1. / thr).float().mean()  # best possible recall = gt boxes that can be recalled (metric > 0.25) / all gt boxes
        return bpr, aat

    anchors = m.anchor_grid.clone().cpu().view(-1, 2)  # current anchors
    bpr, aat = metric(anchors)
    print(f'anchors/target = {aat:.2f}, Best Possible Recall (BPR) = {bpr:.4f}', end='')
    if bpr < 0.98:  # threshold to recompute: below 0.98 the anchors are re-estimated with k-means
        print('. Attempting to improve anchors, please wait...')
        na = m.anchor_grid.numel() // 2  # number of anchors
        try:
            anchors = kmean_anchors(dataset, n=na, img_size=imgsz, thr=thr, gen=1000, verbose=False)
        except Exception as e:
            print(f'{prefix}ERROR: {e}')
        new_bpr = metric(anchors)[0]
        if new_bpr > bpr:  # replace anchors
            anchors = torch.tensor(anchors, device=m.anchors.device).type_as(m.anchors)
            m.anchor_grid[:] = anchors.clone().view_as(m.anchor_grid)  # for inference [9, 2] -> [3, 1, 3, 1, 1, 2]
            m.anchors[:] = anchors.clone().view_as(m.anchors) / m.stride.to(m.anchors.device).view(-1, 1, 1)  # loss [9, 2] -> [3, 3, 2]
            check_anchor_order(m)  # make sure the anchor order matches the stride order, flip if not
            print(f'{prefix}New anchors saved to model. Update model *.yaml to use these anchors in the future.')
        else:
            print(f'{prefix}Original anchors better than new anchors. Proceeding with original anchors.')
    print('')  # newline
```

```python
def kmean_anchors(dataset='./data/coco128.yaml', n=9, img_size=640, thr=4.0, gen=1000, verbose=True):
    """ Creates kmeans-evolved anchors from training dataset

        Arguments:
            dataset: path to data.yaml, or a loaded dataset
            n: number of anchors
            img_size: image size used for training
            thr: anchor-label wh ratio threshold hyperparameter hyp['anchor_t'] used for training, default=4.0
            gen: generations to evolve anchors using genetic algorithm
            verbose: print all results

        Return:
            k: kmeans evolved anchors

        Usage:
            from utils.autoanchor import *; _ = kmean_anchors()
    """
    from scipy.cluster.vq import kmeans

    thr = 1. / thr
    prefix = colorstr('autoanchor: ')

    def metric(k, wh):  # compute metrics
        # [N,1,2] / [1,9,2]: broadcasting repeats [N,1,2] 9 times along dim 1 and [1,9,2] N times along dim 0, giving [N,9,2]
        r = wh[:, None] / k[None]  # [N,9,2] width and height ratios of N boxes against 9 anchors; closer to 1 is a better match
        # torch.min(r, 1. / r) folds every ratio to <= 1; .min(2) keeps the smaller of the two ratios,
        # i.e. the worse-matching side; [0] takes the values
        x = torch.min(r, 1. / r).min(2)[0]  # ratio metric [N,9]
        # x = wh_iou(wh, torch.tensor(k))  # iou metric
        # x.max(1) takes the max over the 9 anchors: for each box, the worst-side ratio of its best-matching anchor, [N]
        return x, x.max(1)[0]  # x, best_x

    def anchor_fitness(k):  # mutation fitness
        _, best = metric(torch.tensor(k, dtype=torch.float32), wh)
        return (best * (best > thr).float()).mean()  # fitness

    def print_results(k):
        k = k[np.argsort(k.prod(1))]  # sort small to large
        x, best = metric(k, wh0)
        bpr, aat = (best > thr).float().mean(), (x > thr).float().mean() * n  # best possible recall, anch > thr
        print(f'{prefix}thr={thr:.2f}: {bpr:.4f} best possible recall, {aat:.2f} anchors past thr')
        print(f'{prefix}n={n}, img_size={img_size}, metric_all={x.mean():.3f}/{best.mean():.3f}-mean/best, '
              f'past_thr={x[x > thr].mean():.3f}-mean: ', end='')
        for i, x in enumerate(k):
            print('%i,%i' % (round(x[0]), round(x[1])), end=', ' if i < len(k) - 1 else '\n')  # use in *.cfg
        return k

    if isinstance(dataset, str):  # *.yaml file
        with open(dataset, encoding='ascii', errors='ignore') as f:
            data_dict = yaml.safe_load(f)  # model dict
        from utils.datasets import LoadImagesAndLabels
        dataset = LoadImagesAndLabels(data_dict['train'], augment=True, rect=True)

    # Get label wh
    shapes = img_size * dataset.shapes / dataset.shapes.max(1, keepdims=True)  # scale the longer side to img_size, the shorter side proportionally
    wh0 = np.concatenate([l[:, 3:5] * s for s, l in zip(shapes, dataset.labels)])  # wh in pixels

    # Filter
    i = (wh0 < 3.0).any(1).sum()  # boxes whose width or height is below 3 pixels
    if i:
        print(f'{prefix}WARNING: Extremely small objects found. {i} of {len(wh0)} labels are < 3 pixels in size.')
    wh = wh0[(wh0 >= 2.0).any(1)]  # filter > 2 pixels: keeps boxes with at least one side of 2 pixels or more
    # wh = wh * (np.random.rand(wh.shape[0], 1) * 0.9 + 0.1)  # multiply by random scale 0-1

    # Kmeans calculation
    print(f'{prefix}Running kmeans for {n} anchors on {len(wh)} points...')
    # Whitening: divide each dimension by its standard deviation before clustering
    s = wh.std(0)  # sigmas for whitening
    k, dist = kmeans(wh / s, n, iter=30)  # points, mean distance
    assert len(k) == n, f'{prefix}ERROR: scipy.cluster.vq.kmeans requested {n} points but returned only {len(k)}'
    k *= s  # undo the whitening
    wh = torch.tensor(wh, dtype=torch.float32)  # filtered
    wh0 = torch.tensor(wh0, dtype=torch.float32)  # unfiltered
    k = print_results(k)

    # Plot
    # k, d = [None] * 20, [None] * 20
    # for i in tqdm(range(1, 21)):
    #     k[i-1], d[i-1] = kmeans(wh / s, i)  # points, mean distance
    # fig, ax = plt.subplots(1, 2, figsize=(14, 7), tight_layout=True)
    # ax = ax.ravel()
    # ax[0].plot(np.arange(1, 21), np.array(d) ** 2, marker='.')
    # fig, ax = plt.subplots(1, 2, figsize=(14, 7))  # plot wh
    # ax[0].hist(wh[wh[:, 0]<100, 0],400)
    # ax[1].hist(wh[wh[:, 1]<100, 1],400)
    # fig.savefig('wh.png', dpi=200)

    # Evolve
    npr = np.random
    f, sh, mp, s = anchor_fitness(k), k.shape, 0.9, 0.1  # fitness, shape, mutation prob, sigma
    pbar = tqdm(range(gen), desc=f'{prefix}Evolving anchors with Genetic Algorithm:')  # progress bar
    for _ in pbar:
        v = np.ones(sh)  # [9,2] mutation factors
        while (v == 1).all():  # mutate until a change occurs (prevent duplicates)
            # npr.random(sh) < mp selects each entry with probability 0.9;
            # random.random() scales the mutation by a random number in [0, 1);
            # npr.randn draws standard normal noise, * s sets its standard deviation, + 1 moves the mean to 1
            v = ((npr.random(sh) < mp) * random.random() * npr.randn(*sh) * s + 1).clip(0.3, 3.0)
        kg = (k.copy() * v).clip(min=2.0)  # mutated anchors; .clip(min=2.0) keeps width and height at 2 pixels or more
        fg = anchor_fitness(kg)
        if fg > f:
            f, k = fg, kg.copy()
            pbar.desc = f'{prefix}Evolving anchors with Genetic Algorithm: fitness = {f:.4f}'
            if verbose:
                print_results(k)

    return print_results(k)
```
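To see what the ratio metric and the best possible recall measure, the following sketch repeats the computation of metric with NumPy on three boxes and three anchors. The function name `ratio_metric` and all box and anchor sizes are made up for this illustration; only the formula follows the code above.

```python
import numpy as np

def ratio_metric(wh, anchors, thr=4.0):
    """Return (best possible recall, mean number of anchors above threshold per box)."""
    wh = np.asarray(wh, dtype=np.float64)
    anchors = np.asarray(anchors, dtype=np.float64)
    r = wh[:, None, :] / anchors[None, :, :]      # [N, A, 2] width and height ratios
    x = np.minimum(r, 1.0 / r).min(axis=2)        # [N, A] worse of the two side ratios, always <= 1
    best = x.max(axis=1)                          # [N] score of the best anchor for every box
    bpr = (best > 1.0 / thr).mean()               # share of boxes matched by at least one anchor
    aat = (x > 1.0 / thr).sum(axis=1).mean()      # average number of matching anchors per box
    return bpr, aat

anchors = [[10, 13], [30, 61], [116, 90]]         # assumed anchors, small to large
wh = [[12, 15], [100, 100], [400, 20]]            # assumed ground-truth box sizes in pixels
bpr, aat = ratio_metric(wh, anchors)
print(round(bpr, 3), aat)                         # 0.667 1.0
```

The 400*20 box is more than 4 times wider or flatter than every anchor, so no anchor can recall it and the BPR drops to 2/3. In check_anchors a BPR below 0.98 is what triggers the k-means recomputation.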

2:Backbone

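(1) Slicing operation (Focus)

Taking the Yolov5s structure as an example, the original 640*640*3 image enters the Focus structure, is sliced into a 320*320*12 feature map, and after one convolution becomes a 320*320*32 feature map.

Advantage: it downsamples while losing little feature information (the effect on mAP is small). In addition, one Focus layer replaces three convolution layers of v3, which reduces both the parameter count and the FLOPs and therefore speeds up the network.

Code: in common.py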

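```python
class Focus(nn.Module):
    # Focus wh information into c-space
    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True):  # ch_in, ch_out, kernel, stride, padding, groups
        super().__init__()
        self.conv = Conv(c1 * 4, c2, k, s, p, g, act)
        # self.contract = Contract(gain=2)

    def forward(self, x):  # x(b,c,h,w) -> y(b,4c,h/2,w/2)
        return self.conv(torch.cat([x[..., ::2, ::2], x[..., 1::2, ::2], x[..., ::2, 1::2], x[..., 1::2, 1::2]], 1))
        # return self.conv(self.contract(x))
```

In x[..., ::2, ::2], the ... stands for all leading dimensions, replacing the run of : slices that would otherwise be written out. For a PyTorch tensor of shape (B, C, H, W), the first ::2 takes every second row (the height axis) starting from 0, and the second ::2 takes every second column (the width axis) starting from 0. Together they pick out the red cells of the three channels in the figure; the other three slices work the same way.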

torch.cat(..., 1) concatenates tensors of shape (B, C, H, W) along dimension 1, that is, along the channel axis.
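The slicing itself can be tried on a tiny array. The sketch below uses NumPy instead of PyTorch so that it runs without the model code; `focus_slice` is a name chosen for this example, and it covers only the slice-and-concatenate step, not the convolution that follows in Focus.

```python
import numpy as np

def focus_slice(x):
    """Rearrange (B, C, H, W) into (B, 4C, H/2, W/2) by taking every second pixel."""
    return np.concatenate(
        [x[..., ::2, ::2], x[..., 1::2, ::2], x[..., ::2, 1::2], x[..., 1::2, 1::2]],
        axis=1,  # stack the four sub-images along the channel axis
    )

x = np.arange(16).reshape(1, 1, 4, 4)   # one 4x4 single-channel "image" holding 0..15
y = focus_slice(x)
print(y.shape)            # (1, 4, 2, 2)
print(y[0, 0].tolist())   # [[0, 2], [8, 10]]  (even rows, even columns)
print(y[0, 3].tolist())   # [[5, 7], [13, 15]] (odd rows, odd columns)
```

Every input value appears exactly once in the output, which is why the operation halves the resolution without discarding information.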

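From version 6.0 on, YOLOv5 replaces the first layer of the network (previously the Focus module) with a 6*6 convolution layer. The two are equivalent in theory, but on some current GPU devices (and their optimized kernels) the 6*6 convolution runs more efficiently than the Focus module.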

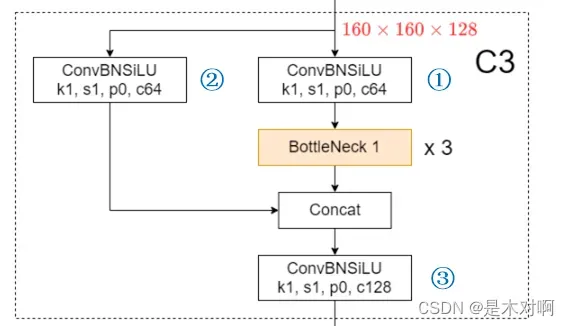

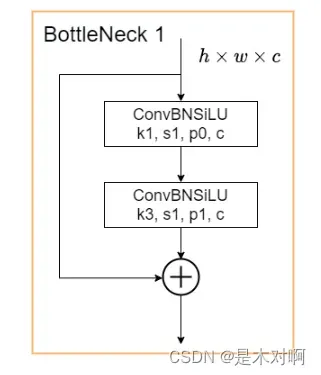

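(2) CSPNet (Cross Stage Partial Network)

The authors of CSPNet attribute the high inference cost to duplicated gradient information during network optimization. A CSP module therefore splits the feature map of the base layer into two parts and merges them again through a cross-stage hierarchy, which reduces computation while maintaining accuracy.

The figure is taken from YOLOv5网络详解; thanks to 太阳花的小绿豆.

Code: in common.py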

class Bottleneck(nn.Module):

# Standard bottleneck

def __init__(self, c1, c2, shortcut=True, g=1, e=0.5): # ch_in, ch_out, shortcut, groups, expansion

super().__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1)

self.cv2 = Conv(c_, c2, 3, 1, g=g)

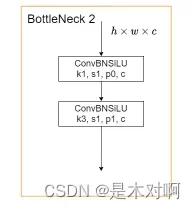

self.add = shortcut and c1 == c2 # 若为True,则为Bottleneck1,在Backbone使用,否则为Bottleneck2,在Neck中使用

def forward(self, x):

return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

class C3(nn.Module):

# CSP Bottleneck with 3 convolutions

def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5): # ch_in, ch_out, number, shortcut, groups, expansion

super().__init__()

c_ = int(c2 * e) # hidden channels

self.cv1 = Conv(c1, c_, 1, 1) # 对应上图中1

self.cv2 = Conv(c1, c_, 1, 1) # 对应上图中2

self.cv3 = Conv(2 * c_, c2, 1) # optional act=FReLU(c2) 对应上图中3

self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

def forward(self, x):

return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), 1))关于BottleneckCSPSPPF部分可参考YOLOv5中的CSP结构

3:Neck

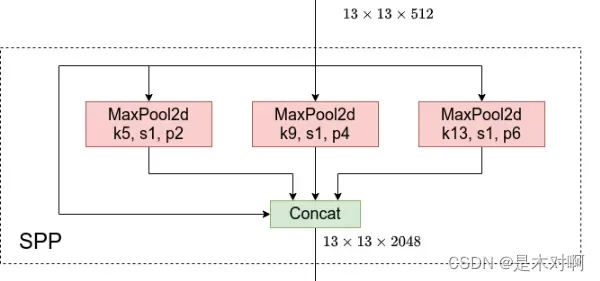

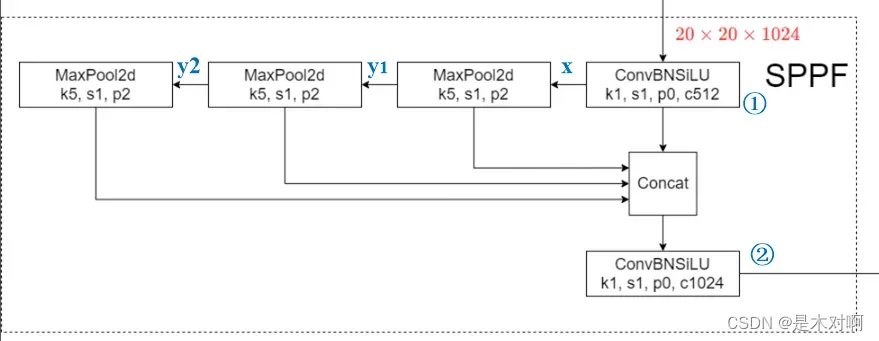

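(1) SPP replaced by SPPF

SPP passes the input through several MaxPool layers of different kernel sizes in parallel and then fuses the results, which helps to some extent with objects at multiple scales.

Code: in common.py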

```python
class SPP(nn.Module):
    # Spatial Pyramid Pooling (SPP) layer https://arxiv.org/abs/1406.4729
    def __init__(self, c1, c2, k=(5, 9, 13)):
        super().__init__()
        c_ = c1 // 2  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c_ * (len(k) + 1), c2, 1, 1)
        self.m = nn.ModuleList([nn.MaxPool2d(kernel_size=x, stride=1, padding=x // 2) for x in k])

    def forward(self, x):
        x = self.cv1(x)
        with warnings.catch_warnings():
            warnings.simplefilter('ignore')  # suppress torch 1.9.0 max_pool2d() warning
            return self.cv2(torch.cat([x] + [m(x) for m in self.m], 1))
```

SPPF instead passes the input serially through several 5*5 MaxPool layers and concatenates the output of every stage. Compared with SPP it computes faster.

Code: in common.py

```python
class SPPF(nn.Module):
    # Spatial Pyramid Pooling - Fast (SPPF) layer for YOLOv5 by Glenn Jocher
    def __init__(self, c1, c2, k=5):  # equivalent to SPP(k=(5, 9, 13))
        super().__init__()
        c_ = c1 // 2  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)  # conv 1 in the figure
        self.cv2 = Conv(c_ * 4, c2, 1, 1)  # conv 2 in the figure
        self.m = nn.MaxPool2d(kernel_size=k, stride=1, padding=k // 2)

    def forward(self, x):
        x = self.cv1(x)
        with warnings.catch_warnings():
            warnings.simplefilter('ignore')  # suppress torch 1.9.0 max_pool2d() warning
            y1 = self.m(x)
            y2 = self.m(y1)
            return self.cv2(torch.cat((x, y1, y2, self.m(y2)), 1))
```
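The comment "equivalent to SPP(k=(5, 9, 13))" can be checked numerically: two chained 5*5 max-pools with stride 1 cover the same window as one 9*9 pool, and three cover a 13*13 window. The sketch below is a NumPy stand-in for nn.MaxPool2d on a single 2-D map; `max_pool_same` is a helper written for this example, and it pads with -inf so that padded cells never win the maximum.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def max_pool_same(x, k):
    """Stride-1 max pooling over a 2-D array, padded with -inf so the output keeps the input size."""
    p = k // 2
    padded = np.pad(x, p, mode="constant", constant_values=-np.inf)
    return sliding_window_view(padded, (k, k)).max(axis=(2, 3))

rng = np.random.default_rng(0)
x = rng.standard_normal((20, 20))   # random feature map used only for the check

y1 = max_pool_same(x, 5)
y2 = max_pool_same(y1, 5)           # receptive field 9x9
y3 = max_pool_same(y2, 5)           # receptive field 13x13

print(np.array_equal(y2, max_pool_same(x, 9)))    # True
print(np.array_equal(y3, max_pool_same(x, 13)))   # True
```

Because the serial version reuses y1 and y2, it only ever runs the cheap 5*5 kernel, which is where the speed-up over the parallel 5, 9 and 13 pools comes from.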

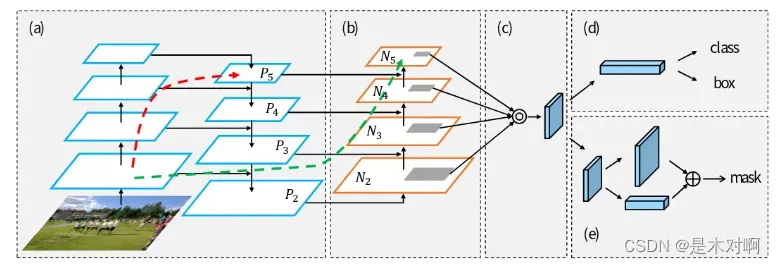

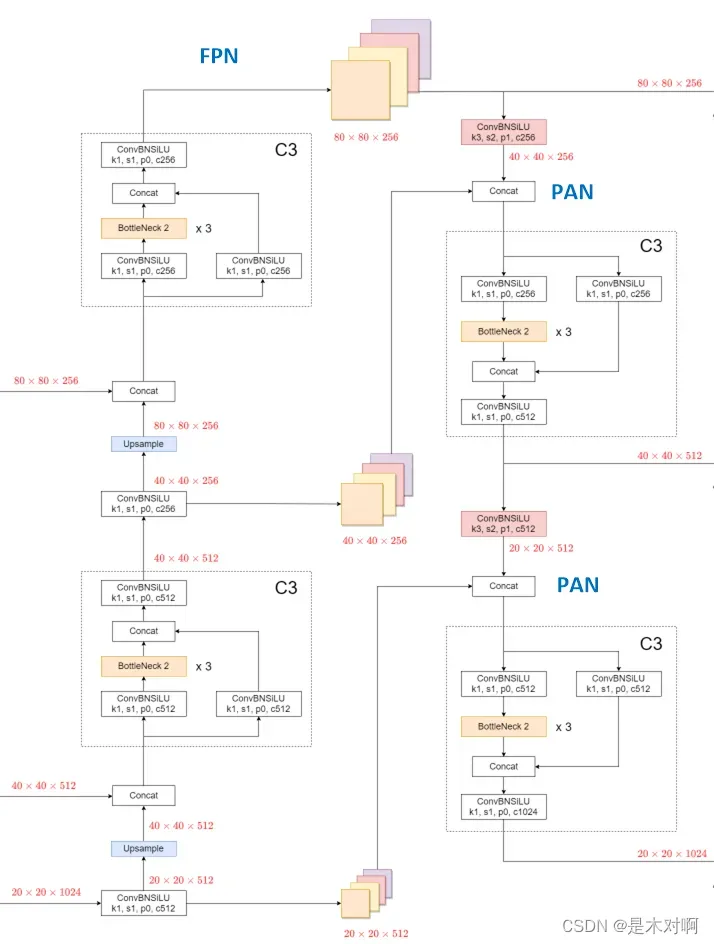

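(2) CSP + PAN (Path Aggregation Network) structure

PAN adds bottom-up feature fusion on top of FPN (top-down feature fusion), as shown in (b) of the figure above.

As the figure shows, CSP appears not only inside every C3 module but also in the PAN structure.

FPN passes strong semantic information down the pyramid (clearer for large objects), while PAN passes strong localization information up (clearer for small objects).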

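4:Output

(1) Loss functions

This part follows 深入浅出Yolo系列之Yolov3&Yolov4&Yolov5&Yolox核心基础知识完整讲解.

The loss of an object detector generally consists of a classification loss and a regression loss.

Evolution of the regression loss: Smooth L1 Loss -> IoU Loss (2016) -> GIoU Loss (2019) -> DIoU Loss (2020) -> CIoU Loss (2020)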

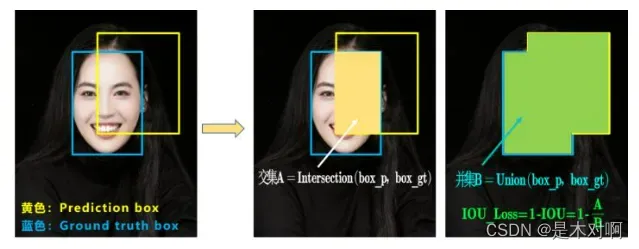

IOU_Loss:

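The larger the IoU of the two boxes (the closer they lie), the smaller the loss. Problems:

Problem 1 (state 1): when the predicted box and the target box do not intersect, IOU = 0 and says nothing about how far apart the two boxes are. The loss is then constant and provides no useful gradient, so IOU_Loss cannot optimize the non-intersecting case.

Problem 2 (states 2 and 3): when two predicted boxes have the same size and the same IOU, IOU_Loss cannot tell apart the different ways in which they intersect the target.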
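Problem 1 shows up directly in numbers. The following sketch computes the IoU of axis-aligned boxes given as (x1, y1, x2, y2); the function and the box coordinates are assumptions made for this illustration, not code from YOLOv5.

```python
def iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))   # width of the overlap, 0 if disjoint
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))   # height of the overlap, 0 if disjoint
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union

target = (0, 0, 10, 10)
near = (11, 0, 21, 10)      # just outside the target
far = (100, 0, 110, 10)     # far away from the target
print(1 - iou(target, near), 1 - iou(target, far))   # 1.0 1.0
```

Both predictions get a loss of 1.0, so the loss gives the optimizer no hint that the first box is much closer to the target.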

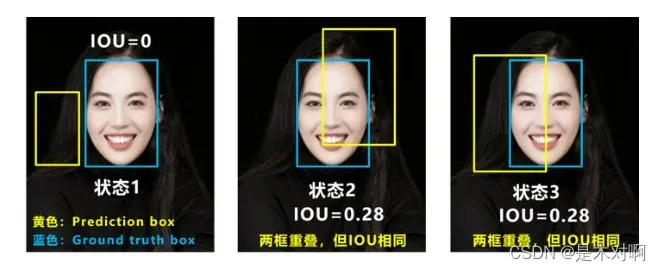

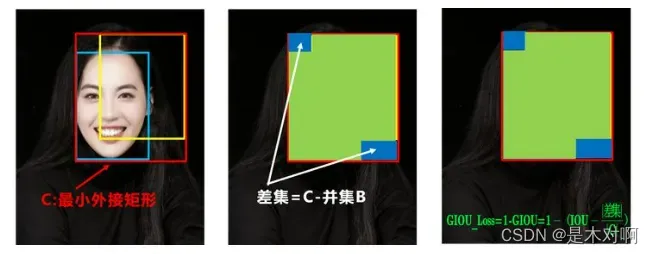

GIOU_Loss:

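The formula shows that the larger the IOU and the smaller the difference set (the part of the smallest enclosing box that the union of the two boxes does not cover), the smaller the loss. Problems: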

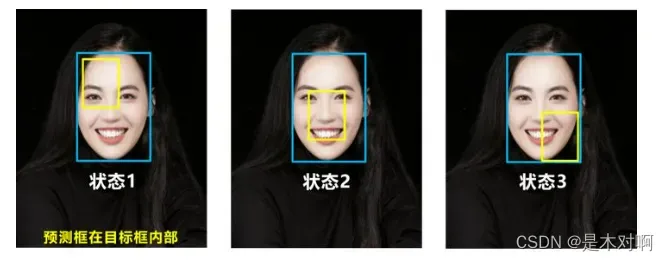

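Problem: in states 1, 2 and 3 the predicted box lies inside the target box and has the same size each time. The difference set is then identical in all three states, so the three GIOU values are identical as well. GIOU degenerates to IOU here and cannot distinguish the relative positions.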
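A small numeric check makes both sides of GIoU visible. The sketch uses the standard definition GIoU = IoU - |C \ (A ∪ B)| / |C|, where C is the smallest enclosing box; the function and all coordinates are chosen for this example only.

```python
def giou(a, b):
    """GIoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    # area of the smallest box enclosing both a and b
    c_area = (max(a[2], b[2]) - min(a[0], b[0])) * (max(a[3], b[3]) - min(a[1], b[1]))
    return inter / union - (c_area - union) / c_area

target = (0, 0, 10, 10)
near, far = (11, 0, 21, 10), (100, 0, 110, 10)          # disjoint boxes at two distances
inside = [(0, 0, 4, 4), (3, 3, 7, 7), (6, 6, 10, 10)]   # same-size boxes inside the target

print(giou(target, near) > giou(target, far))   # True: distance now matters
print([giou(target, p) for p in inside])        # [0.16, 0.16, 0.16]
```

For disjoint boxes GIoU separates near from far, which plain IoU could not. For the three boxes inside the target the enclosing box equals the target, the penalty term vanishes, and all three get the plain IoU value of 0.16.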

To address this problem, DIOU_Loss was proposed at AAAI 2020.

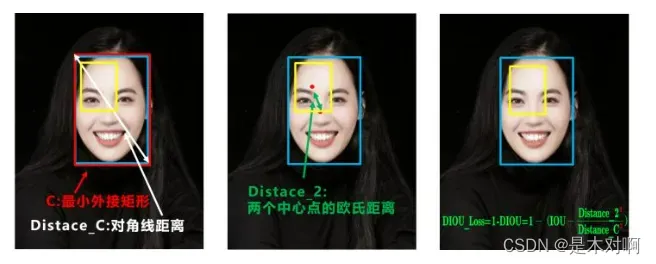

DIOU_Loss:

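DIOU_Loss takes both the overlap area and the center-point distance into account. When the target box encloses the predicted box, it measures the distance between the two boxes directly, so DIOU_Loss converges faster. It does not, however, consider the aspect ratio.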

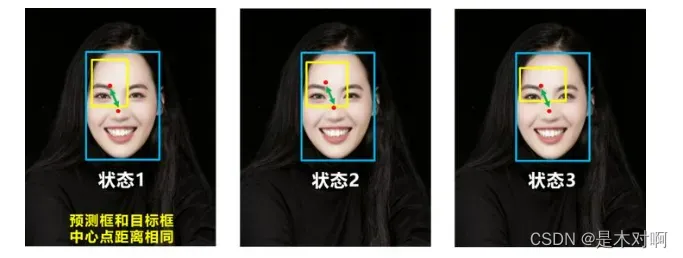

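In the three cases above the target box encloses the predicted box, which is where DIOU_Loss would normally help. But the predicted boxes all share the same center point, so according to the DIOU_Loss formula the three values are identical. CIOU_Loss was proposed to address this.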
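Both behaviours can be reproduced with the standard DIoU definition, DIoU = IoU - ρ² / c², where ρ is the distance between the box centers and c the diagonal of the smallest enclosing box. The function and the coordinates below are assumptions for this illustration.

```python
def diou(a, b):
    """DIoU of two boxes given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    # squared distance between the two box centers
    rho2 = ((a[0] + a[2]) - (b[0] + b[2])) ** 2 / 4 + ((a[1] + a[3]) - (b[1] + b[3])) ** 2 / 4
    # squared diagonal of the smallest enclosing box
    c2 = (max(a[2], b[2]) - min(a[0], b[0])) ** 2 + (max(a[3], b[3]) - min(a[1], b[1])) ** 2
    return inter / union - rho2 / c2

target = (0, 0, 10, 10)
corner = (0, 0, 4, 4)                                  # inside the target, off-center
square, wide, tall = (3, 3, 7, 7), (1, 4, 9, 6), (4, 1, 6, 9)   # same center, same area

print(round(diou(target, corner), 4), diou(target, square))   # 0.07 0.16
print(diou(target, square) == diou(target, wide) == diou(target, tall))   # True
```

The off-center box is penalized (0.07 against 0.16), which GIoU could not do. The three centered boxes of equal area still score the same although only the square one has the aspect ratio of the target.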

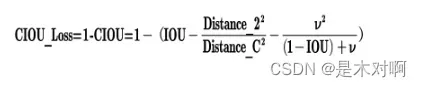

CIOU_Loss:

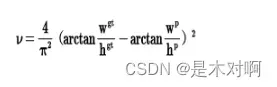

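CIOU_Loss shares the leading terms of its formula with DIOU_Loss and adds one more influence factor on top, which takes the aspect ratios of the predicted box and the target box into account.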
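The extra factor can be sketched with the usual CIoU definition: CIoU = IoU - ρ² / c² - αv, with v = (4 / π²) (arctan(w_gt / h_gt) - arctan(w / h))² and α = v / (1 - IoU + v). The code below is an illustrative plain-Python version, not the YOLOv5 implementation; the small eps in α is an assumption added to avoid a division by zero for identical boxes.

```python
import math

def ciou(gt, pred, eps=1e-9):
    """CIoU of a ground-truth box and a predicted box, both given as (x1, y1, x2, y2)."""
    iw = max(0.0, min(gt[2], pred[2]) - max(gt[0], pred[0]))
    ih = max(0.0, min(gt[3], pred[3]) - max(gt[1], pred[1]))
    inter = iw * ih
    w_gt, h_gt = gt[2] - gt[0], gt[3] - gt[1]
    w, h = pred[2] - pred[0], pred[3] - pred[1]
    iou = inter / (w_gt * h_gt + w * h - inter)
    # DIoU part: squared center distance over squared enclosing-box diagonal
    rho2 = ((gt[0] + gt[2]) - (pred[0] + pred[2])) ** 2 / 4 + ((gt[1] + gt[3]) - (pred[1] + pred[3])) ** 2 / 4
    c2 = (max(gt[2], pred[2]) - min(gt[0], pred[0])) ** 2 + (max(gt[3], pred[3]) - min(gt[1], pred[1])) ** 2
    # aspect-ratio term v and its trade-off weight alpha
    v = 4 / math.pi ** 2 * (math.atan(w_gt / h_gt) - math.atan(w / h)) ** 2
    alpha = v / (1 - iou + v + eps)
    return iou - rho2 / c2 - alpha * v

target = (0, 0, 10, 10)
square, wide, tall = (3, 3, 7, 7), (1, 4, 9, 6), (4, 1, 6, 9)   # same center, same area
for box in (square, wide, tall):
    print(round(ciou(target, box), 4))
```

The square prediction, whose aspect ratio matches the target, keeps the score 0.16, while the wide and the tall predictions both score lower. This is the distinction that DIoU alone could not make.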

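Summary:

IOU_Loss: mainly considers the overlap area of the detected box and the target box.

GIOU_Loss: builds on IOU and solves the problem of boxes that do not overlap.

DIOU_Loss: builds on IOU and GIOU and adds the distance between the box center points.

CIOU_Loss: builds on DIOU and adds the aspect ratio of the boxes.

The YOLOv5 loss consists of three main parts:

- Classes loss (classification loss): uses BCE loss and is computed for positive samples only.

- Objectness loss (obj loss): uses BCE loss. Here obj refers to the CIoU between the bounding box predicted by the network and the GT box, and the loss is computed over all samples.

- Location loss (localization loss): uses CIoU loss and is computed for positive samples only.

The code is in utils/loss.py; for a walkthrough see YOLOv5输出端损失函数.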
