CSP-Darknet53

- 0. 引言

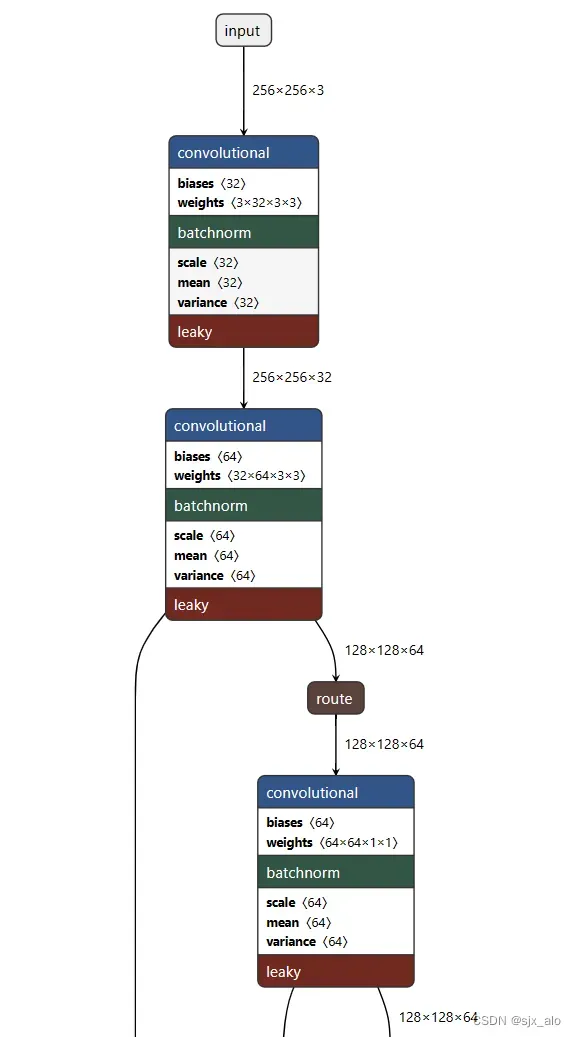

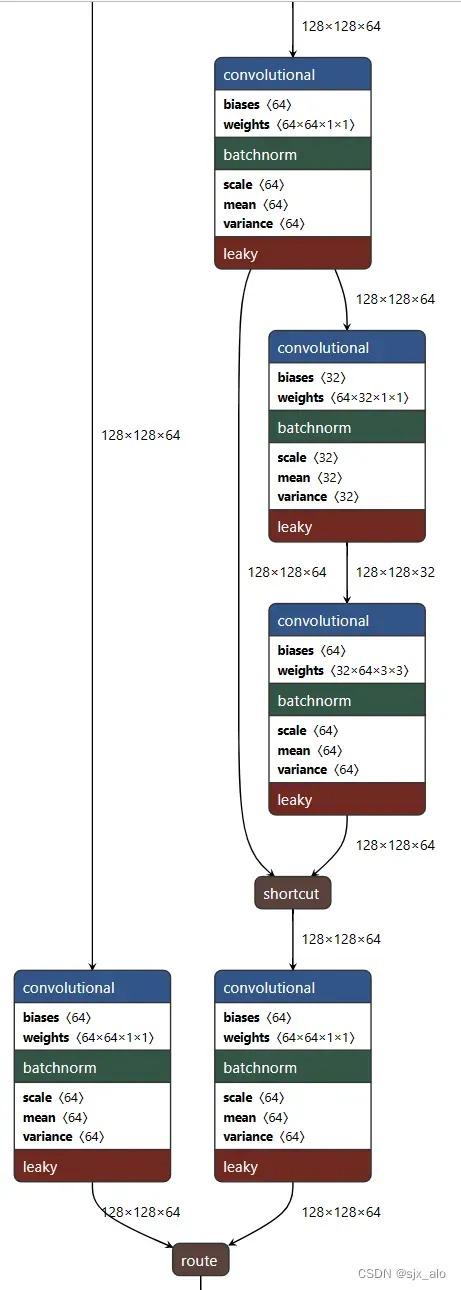

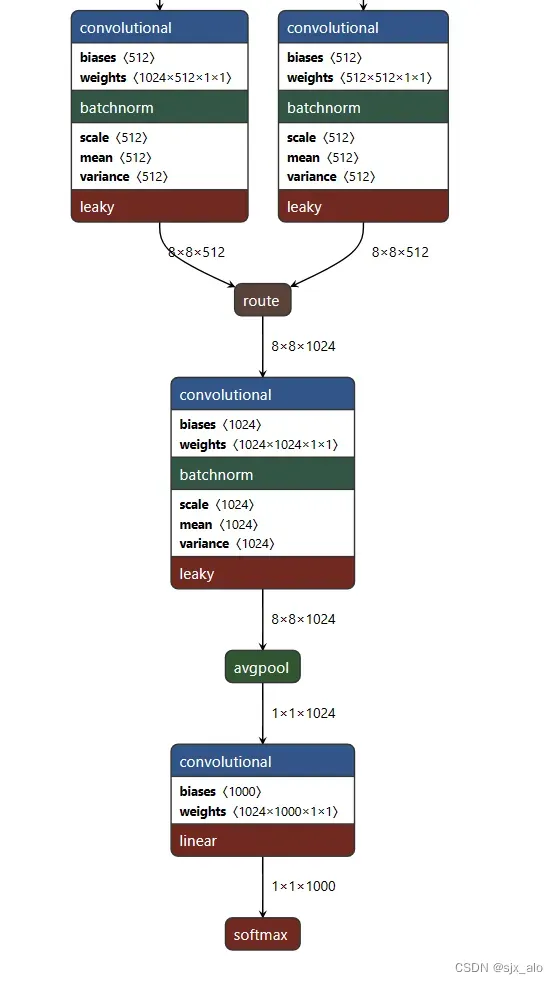

- 1. 网络结构图

- 1.1 输入部分

- 1.2 CSP部分结构

- 1.3 输出部分

- 2. 代码实现

- 2.1 代码整体实现

- 2.2 代码各个阶段实现

- 3. 代码测试

- 4. 结论

0. 引言

CSP-Darknet53无论是其作为CV Backbone,还是说它在别的数据集上取得极好的效果。与此同时,它与别的网络的适配能力极强。这些特点都在宣告:CSP-Darknet53的重要性。

关于原理部分的内容请查看这里CV 经典主干网络 (Backbone) 系列: CSPNet

1. 网络结构图

具体网络结构可以参考YOLO V3详解(一):网络结构介绍中使用的工具来进行操作。具体网址和对应的权重文件下载地址如下:

模型可视化工具:https://lutzroeder.github.io/netron/

cfg文件下载网址:https://github.com/WongKinYiu/CrossStagePartialNetworks

得到的部分网络结构图的如下所示。

1.1 输入部分

1.2 CSP部分结构

1.3 输出部分

2. 代码实现

2.1 代码整体实现

通过代码实现CSP-Darknet53。框架为PyTorch,代码整体框架实现如下所示:

class CsDarkNet53(nn.Module):

def __init__(self, num_classes):

super(CsDarkNet53, self).__init__()

input_channels = 32

# Network

self.stage1 = Conv2dBatchLeaky(3, input_channels, 3, 1, activation='mish')

self.stage2 = Stage2(input_channels)

self.stage3 = Stage3(4*input_channels)

self.stage4 = Stage(4*input_channels, 8)

self.stage5 = Stage(8*input_channels, 8)

self.stage6 = Stage(16*input_channels, 4)

self.conv = Conv2dBatchLeaky(32*input_channels, 32*input_channels, 1, 1, activation='mish')

self.avgpool = nn.AdaptiveAvgPool2d((1,1))

self.fc = nn.Linear(1024, num_classes)

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

elif isinstance(m, (nn.BatchNorm2d, nn.GroupNorm)):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

def forward(self, x):

stage1 = self.stage1(x)

stage2 = self.stage2(stage1)

stage3 = self.stage3(stage2)

stage4 = self.stage4(stage3)

stage5 = self.stage5(stage4)

stage6 = self.stage6(stage5)

conv = self.conv(stage6)

x = self.avgpool(conv)

x = x.view(-1, 1024)

x = self.fc(x)

return x

2.2 代码各个阶段实现

在代码中,对各个阶段的具体实现如下所示:

class Mish(nn.Module):

def __init__(self):

super(Mish, self).__init__()

def forward(self, x):

return x * torch.tanh(F.softplus(x))

class Conv2dBatchLeaky(nn.Module):

"""

This convenience layer groups a 2D convolution, a batchnorm and a leaky ReLU.

"""

def __init__(self, in_channels, out_channels, kernel_size, stride, activation='leaky', leaky_slope=0.1):

super(Conv2dBatchLeaky, self).__init__()

# Parameters

self.in_channels = in_channels

self.out_channels = out_channels

self.kernel_size = kernel_size

self.stride = stride

if isinstance(kernel_size, (list, tuple)):

self.padding = [int(k/2) for k in kernel_size]

else:

self.padding = int(kernel_size/2)

self.leaky_slope = leaky_slope

# self.mish = Mish()

# Layer

if activation == "leaky":

self.layers = nn.Sequential(

nn.Conv2d(self.in_channels, self.out_channels, self.kernel_size, self.stride, self.padding, bias=False),

nn.BatchNorm2d(self.out_channels),

nn.LeakyReLU(self.leaky_slope, inplace=True)

)

elif activation == "mish":

self.layers = nn.Sequential(

nn.Conv2d(self.in_channels, self.out_channels, self.kernel_size, self.stride, self.padding, bias=False),

nn.BatchNorm2d(self.out_channels),

Mish()

)

elif activation == "linear":

self.layers = nn.Sequential(

nn.Conv2d(self.in_channels, self.out_channels, self.kernel_size, self.stride, self.padding, bias=False)

)

def __repr__(self):

s = '{name} ({in_channels}, {out_channels}, kernel_size={kernel_size}, stride={stride}, padding={padding}, negative_slope={leaky_slope})'

return s.format(name=self.__class__.__name__, **self.__dict__)

def forward(self, x):

x = self.layers(x)

return x

class SmallBlock(nn.Module):

def __init__(self, nchannels):

super().__init__()

self.features = nn.Sequential(

Conv2dBatchLeaky(nchannels, nchannels, 1, 1, activation='mish'),

Conv2dBatchLeaky(nchannels, nchannels, 3, 1, activation='mish')

)

# conv_shortcut

'''

参考 https://github.com/bubbliiiing/yolov4-pytorch

shortcut后不接任何conv

'''

# self.active_linear = Conv2dBatchLeaky(nchannels, nchannels, 1, 1, activation='linear')

# self.conv_shortcut = Conv2dBatchLeaky(nchannels, nchannels, 1, 1, activation='mish')

def forward(self, data):

short_cut = data + self.features(data)

# active_linear = self.conv_shortcut(short_cut)

return short_cut

# Stage1 conv [256,256,3]->[256,256,32]

class Stage2(nn.Module):

def __init__(self, nchannels):

super().__init__()

# stage2 32

self.conv1 = Conv2dBatchLeaky(nchannels, 2*nchannels, 3, 2, activation='mish')

self.split0 = Conv2dBatchLeaky(2*nchannels, 2*nchannels, 1, 1, activation='mish')

self.split1 = Conv2dBatchLeaky(2*nchannels, 2*nchannels, 1, 1, activation='mish')

self.conv2 = Conv2dBatchLeaky(2*nchannels, nchannels, 1, 1, activation='mish')

self.conv3 = Conv2dBatchLeaky(nchannels, 2*nchannels, 3, 1, activation='mish')

self.conv4 = Conv2dBatchLeaky(2*nchannels, 2*nchannels, 1, 1, activation='mish')

def forward(self, data):

conv1 = self.conv1(data)

split0 = self.split0(conv1)

split1 = self.split1(conv1)

conv2 = self.conv2(split1)

conv3 = self.conv3(conv2)

shortcut = split1 + conv3

conv4 = self.conv4(shortcut)

route = torch.cat([split0, conv4], dim=1)

return route

class Stage3(nn.Module):

def __init__(self, nchannels):

super().__init__()

# stage3 128

self.conv1 = Conv2dBatchLeaky(nchannels, int(nchannels/2), 1, 1, activation='mish')

self.conv2 = Conv2dBatchLeaky(int(nchannels/2), nchannels, 3, 2, activation='mish')

self.split0 = Conv2dBatchLeaky(nchannels, int(nchannels/2), 1, 1, activation='mish')

self.split1 = Conv2dBatchLeaky(nchannels, int(nchannels/2), 1, 1, activation='mish')

self.block1 = SmallBlock(int(nchannels/2))

self.block2 = SmallBlock(int(nchannels/2))

self.conv3 = Conv2dBatchLeaky(int(nchannels/2), int(nchannels/2), 1, 1, activation='mish')

def forward(self, data):

conv1 = self.conv1(data)

conv2 = self.conv2(conv1)

split0 = self.split0(conv2)

split1 = self.split1(conv2)

block1 = self.block1(split1)

block2 = self.block2(block1)

conv3 = self.conv3(block2)

route = torch.cat([split0, conv3], dim=1)

return route

# Stage4 Stage5 Stage6

class Stage(nn.Module):

def __init__(self, nchannels, nblocks):

super().__init__()

# stage4 : 128

# stage5 : 256

# stage6 : 512

self.conv1 = Conv2dBatchLeaky(nchannels, nchannels, 1, 1, activation='mish')

self.conv2 = Conv2dBatchLeaky(nchannels, 2*nchannels, 3, 2, activation='mish')

self.split0 = Conv2dBatchLeaky(2*nchannels, nchannels, 1, 1, activation='mish')

self.split1 = Conv2dBatchLeaky(2*nchannels, nchannels, 1, 1, activation='mish')

blocks = []

for i in range(nblocks):

blocks.append(SmallBlock(nchannels))

self.blocks = nn.Sequential(*blocks)

self.conv4 = Conv2dBatchLeaky(nchannels, nchannels, 1, 1, activation='mish')

def forward(self,data):

conv1 = self.conv1(data)

conv2 = self.conv2(conv1)

split0 = self.split0(conv2)

split1 = self.split1(conv2)

blocks = self.blocks(split1)

conv4 = self.conv4(blocks)

route = torch.cat([split0, conv4], dim=1)

return route

3. 代码测试

下面使用一个小例子来对代码进行测试。

if __name__ == "__main__":

use_cuda = torch.cuda.is_available()

if use_cuda:

device = torch.device("cuda")

cudnn.benchmark = True

else:

device = torch.device("cpu")

darknet = CsDarkNet53(num_classes=10)

darknet = darknet.cuda()

with torch.no_grad():

darknet.eval()

data = torch.rand(1, 3, 256, 256)

data = data.cuda()

try:

#print(darknet)

summary(darknet,(3,256,256))

print(darknet(data))

except Exception as e:

print(e)

代码的输出如下所示:

Total params: 26,627,434

Trainable params: 26,627,434

Non-trainable params: 0

----------------------------------------------------------------

Input size (MB): 0.75

Forward/backward pass size (MB): 553.51

Params size (MB): 101.58

Estimated Total Size (MB): 655.83

----------------------------------------------------------------

tensor([[ 0.1690, 0.0798, 0.1836, 0.2414, 0.3855, 0.2437, -0.1422, -0.1855,

0.1758, -0.2452]], device='cuda:0')

注意:输出中存在框架结构内容,这里没有将其写在博客中

4. 结论

CSP-Darknet53的代码结构结合着对应的代码实现一起看,可以有效帮助大家理解关于原理部分的内容。希望可以帮助到大家!!!

另外,关于代码中存在的一些小的部分可能会在后面进行介绍。

文章出处登录后可见!

已经登录?立即刷新