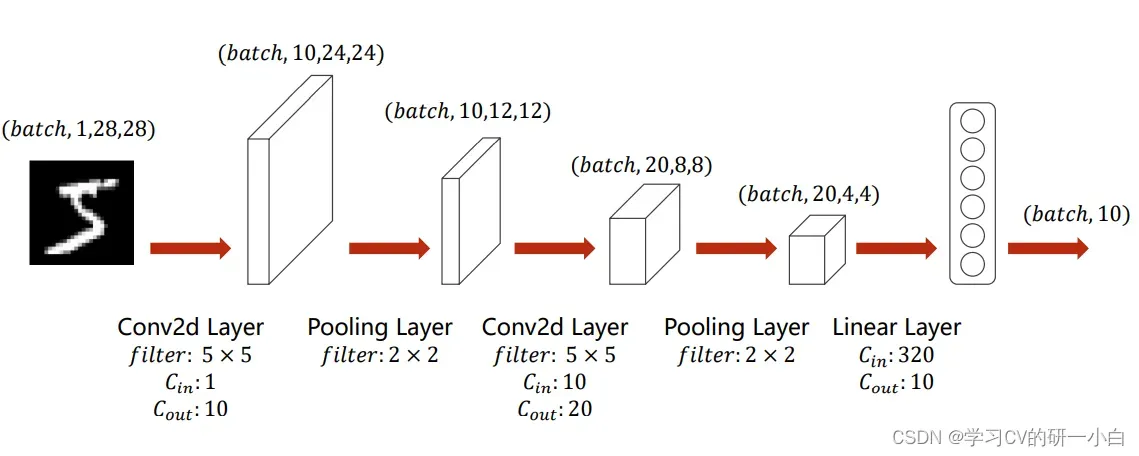

选择下图结构的卷积神经网络来进行训练:

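Steps:

- Apply a 5 x 5 convolution with 1 input channel and 10 output channels. With no padding, a 5 x 5 kernel trims two pixels from each border, so each side shrinks by 4: the 28 x 28 MNIST image becomes 24 x 24, with 10 output channels.

- Apply a 2 x 2 max-pooling layer. The height and width are halved to 12 x 12; the number of channels is unchanged.

- Apply a second 5 x 5 convolution with 10 input channels and 20 output channels. Each side again shrinks by 4, giving 8 x 8, with 20 output channels.

- Apply a 2 x 2 max-pooling layer again. The height and width are halved to 4 x 4; the number of channels is unchanged.

- Finally, flatten the feature map into a vector and feed it to the fully connected layer: the input has 4 x 4 x 20 = 320 elements and the output has 10, one per digit class.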
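The size arithmetic in the steps above can be checked with a few lines of plain Python. This is only a sketch: the helper names `conv_out`, `pool_out`, and `trace_shapes` are hypothetical and not part of the original code. It assumes a 28 x 28 input, stride 1 and no padding for the convolutions (the `Conv2d` defaults), and a pooling stride equal to the pooling window (the `MaxPool2d` default).

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution on a square input."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel):
    """Output side length of max pooling whose stride equals the window size."""
    return (size - kernel) // kernel + 1


def trace_shapes(size=28):
    """Return (channels, height, width) after each layer described above."""
    shapes = []
    size = conv_out(size, kernel=5)   # first 5 x 5 convolution, 1 -> 10 channels
    shapes.append((10, size, size))
    size = pool_out(size, kernel=2)   # first 2 x 2 max pooling
    shapes.append((10, size, size))
    size = conv_out(size, kernel=5)   # second 5 x 5 convolution, 10 -> 20 channels
    shapes.append((20, size, size))
    size = pool_out(size, kernel=2)   # second 2 x 2 max pooling
    shapes.append((20, size, size))
    return shapes


shapes = trace_shapes()
channels, height, width = shapes[-1]
flat_features = channels * height * width  # input size of the fully connected layer

print(shapes)         # [(10, 24, 24), (10, 12, 12), (20, 8, 8), (20, 4, 4)]
print(flat_features)  # 320
```

If the kernel size, padding, or input resolution changes, rerunning this kind of check gives the new input size for the fully connected layer.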

The code is as follows:

1 Prepare the dataset

# Prepare the dataset
batch_size = 64
transform = transforms.Compose([
    transforms.ToTensor(),
    # Normalize with the MNIST mean (0.1307) and standard deviation (0.3081)
    transforms.Normalize((0.1307,), (0.3081,))
])

train_dataset = datasets.MNIST(root='../dataset/mnist/',
                               train=True,
                               download=True,
                               transform=transform)
train_loader = DataLoader(train_dataset,
                          shuffle=True,
                          batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist',
                              train=False,
                              download=True,
                              transform=transform)
test_loader = DataLoader(test_dataset,
                         shuffle=False,
                         batch_size=batch_size)

2 Build the model

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)   # 1 x 28 x 28 -> 10 x 24 x 24
        self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)  # 10 x 12 x 12 -> 20 x 8 x 8
        self.pooling = torch.nn.MaxPool2d(2)                 # halves height and width
        self.fc = torch.nn.Linear(320, 10)                   # 20 * 4 * 4 = 320 features -> 10 classes

    def forward(self, x):
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))
        x = F.relu(self.pooling(self.conv2(x)))
        x = x.view(batch_size, -1)  # flatten to (batch_size, 320)
        x = self.fc(x)              # raw class scores, no softmax here
        return x

model = Net()
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
model.to(device)

3 Define the loss function and optimizer

criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

4 Train and test

def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        inputs, target = inputs.to(device), target.to(device)
        optimizer.zero_grad()

        # forward pass, backward pass, parameter update
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:
            # report the mean loss over the last 300 batches
            print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # no gradients are needed for evaluation
        for data in test_loader:
            inputs, target = data
            inputs, target = inputs.to(device), target.to(device)
            outputs = model(inputs)
            # index of the largest score along dim=1 is the predicted class
            _, predicted = torch.max(outputs, dim=1)
            total += target.size(0)
            correct += (predicted == target).sum().item()
    print('Accuracy on test set: %d %% [%d/%d]' % (100 * correct / total, correct, total))

if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()

5 Full code and results

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

# 准备数据集

batch_size = 64

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307,), (0.3081,))

])

train_dataset = datasets.MNIST(root='../dataset/mnist/',

train=True,

download=True,

transform=transform)

train_loader = DataLoader(train_dataset,

shuffle=True,

batch_size=batch_size)

test_dataset = datasets.MNIST(root='../dataset/mnist',

train=False,

download=True,

transform=transform)

test_loader = DataLoader(test_dataset,

shuffle=False,

batch_size=batch_size)

class Net(torch.nn.Module):

def __init__(self):

super(Net, self).__init__()

self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)

self.pooling = torch.nn.MaxPool2d(2)

self.fc = torch.nn.Linear(320, 10)

def forward(self, x):

batch_size = x.size(0)

x = F.relu(self.pooling(self.conv1(x)))

x = F.relu(self.pooling(self.conv2(x)))

x = x.view(batch_size, -1)

x = self.fc(x)

return x

model = Net()

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

model.to(device)

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):

running_loss = 0.0

for batch_idx, data in enumerate(train_loader, 0):

inputs, target = data

inputs,target=inputs.to(device),target.to(device)

optimizer.zero_grad()

outputs = model(inputs)

loss = criterion(outputs, target)

loss.backward()

optimizer.step()

running_loss += loss.item()

if batch_idx % 300 == 299:

print('[%d,%.5d] loss:%.3f' % (epoch + 1, batch_idx + 1, running_loss / 2000))

running_loss = 0.0

def test():

correct=0

total=0

with torch.no_grad():

for data in test_loader:

inputs,target=data

inputs,target=inputs.to(device),target.to(device)

outputs=model(inputs)

_,predicted=torch.max(outputs.data,dim=1)

total+=target.size(0)

correct+=(predicted==target).sum().item()

print('Accuracy on test set:%d %% [%d%d]' %(100*correct/total,correct,total))

if __name__ =='__main__':

for epoch in range(10):

train(epoch)

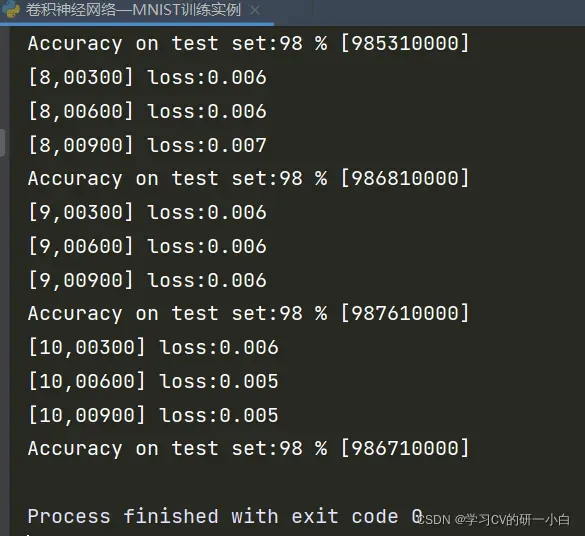

test()运行结果如下:

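Copyright notice: this is an original article by the blogger 学习CV的研一小白. Copyright belongs to the original author; in case of infringement, please contact us for removal.

Original link: https://blog.csdn.net/Learning_AI/article/details/122641455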