How Darknet53 Works

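Darknet53 is a convolutional neural network proposed in 2018 by Joseph Redmon and Ali Farhadi in the paper "YOLOv3: An Incremental Improvement". It is the backbone (feature extractor) of YOLOv3 for object detection and can also be used for image classification. Its design idea is to improve feature extraction by stacking many convolutional layers with residual connections.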

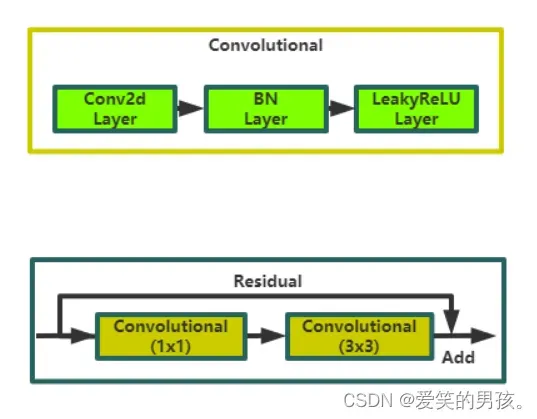

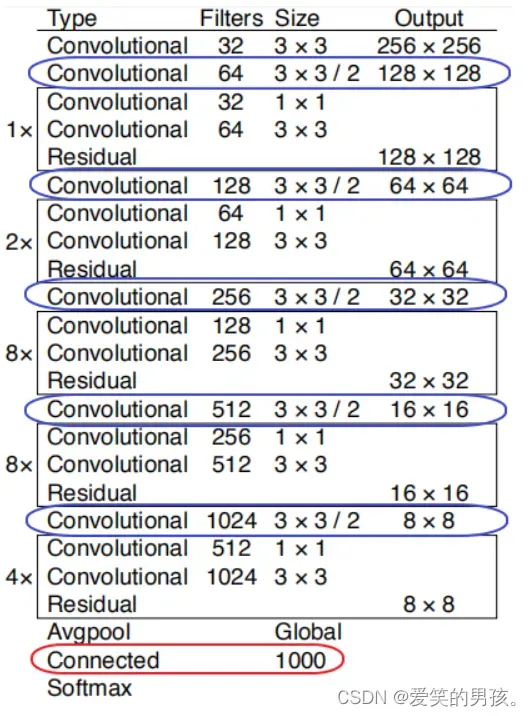

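Darknet53 is named after its 53 weighted layers: 52 convolutional layers plus one final fully connected layer. It contains no max-pooling layers; downsampling is done by five 3×3 convolutions with stride 2. The structure can be divided into three parts: a front part consisting of the initial convolution, a middle part of five stages that each pair a stride-2 convolution with a stack of residual blocks, and a back part consisting of a global average pooling layer and a fully connected layer.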
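As a rough check on where the number 53 comes from, the sketch below counts layers from the stage layout used by the implementation later in this article (1, 2, 8, 8, and 4 residual units, each with two convolutions). The variable names are illustrative only.

```python
# Residual units per stage, as in the Darknet_53 class later in this article
blocks_per_stage = [1, 2, 8, 8, 4]

stem_convs = 1                                # initial 3x3 convolution
downsample_convs = len(blocks_per_stage)      # one stride-2 convolution per stage
residual_convs = 2 * sum(blocks_per_stage)    # each unit has a 1x1 and a 3x3 convolution

conv_layers = stem_convs + downsample_convs + residual_convs
total_layers = conv_layers + 1                # plus the final fully connected layer

print(conv_layers, total_layers)              # 52 53
```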

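Specifically, the network starts with a 3×3 convolution with stride 1 and 32 filters. Each of the five stages then begins with a 3×3 convolution with stride 2, which halves the spatial resolution and doubles the number of channels (64, 128, 256, 512, 1024). The purpose is to progressively shrink the spatial size of the feature map while increasing its depth.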

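Next come the residual blocks in the middle part. Very deep plain networks are slow and hard to train, so Darknet53 adopts the residual block introduced by ResNet. A residual block adds a shortcut connection that lets the network learn the "residual", i.e. the difference between the block's input and its desired output. In Darknet53 each block is a 1×1 convolution that halves the channels, followed by a 3×3 convolution that restores them, with the block input added to the result. The residual structure speeds up convergence, mitigates vanishing gradients, and improves accuracy, which lets the network learn image features better and strengthens its representational power. The five stages contain 1, 2, 8, 8, and 4 residual blocks respectively.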
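To make the shortcut idea concrete, a residual block can be written as below, where $x$ is the block input, $F$ stands for the stacked convolutions inside the block, and $y$ is the block output. These symbols are introduced here for illustration and do not come from the original article.

```latex
y = x + F(x), \qquad
\frac{\partial y}{\partial x} = I + \frac{\partial F(x)}{\partial x}
```

The identity term $I$ gives the gradient a direct path around the convolutions, so it does not have to pass through every weight layer on its way back. This is the usual explanation for why residual connections ease the vanishing-gradient problem.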

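Darknet53 takes a 416×416 RGB image as input and, after the convolutional stages, outputs a 13×13×1024 tensor (an overall downsampling factor of 32). This tensor is passed to the YOLOv3 detection head to detect objects in the image. YOLOv3 additionally uses the 26×26×512 and 52×52×256 feature maps of the two preceding stages for detection at finer scales.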
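The 416 to 13 reduction can be verified with the standard convolution output-size formula. The sketch below assumes 3×3 kernels with padding 1, as in the implementation later in this article. The helper `conv_out_size` is a hypothetical name, and the residual blocks are left out because they do not change the shape.

```python
def conv_out_size(size, kernel=3, stride=1, padding=1):
    """Output size of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1

size = conv_out_size(416)                        # stem: 3x3 convolution, stride 1
shapes = [(size, 32)]
for out_channels in (64, 128, 256, 512, 1024):   # five stride-2 convolutions
    size = conv_out_size(size, stride=2)
    shapes.append((size, out_channels))

for s, c in shapes:
    print(f"{s}x{s}x{c}")
```

The last line printed is `13x13x1024`, and the two lines before it are the 52×52×256 and 26×26×512 feature maps.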

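Characteristics of the Darknet53 architecture include:

- Moderate depth: with 53 layers, Darknet53 is much shallower than ResNet-101 or ResNet-152. The YOLOv3 paper reports that it is more accurate than ResNet-101 while being about 1.5× faster, and close to ResNet-152 in accuracy while being about 2× faster.
- Scalable depth: thanks to the residual structure, more convolutional layers can be added to improve performance without making training much harder.
- Low computational cost: Darknet53 has on the order of 40 million parameters, similar to ResNet-101 rather than far fewer. Its advantage, according to the YOLOv3 paper, is that it needs fewer floating-point operations than ResNet-101 and ResNet-152, which makes it faster.
- Efficient inference: the YOLOv3 paper reports that Darknet53 reaches the highest measured floating-point operations per second among the backbones it compares, meaning that its structure makes good use of the GPU.
- Suited to classification over many categories: Darknet53 is strong at learning image features, and as a classifier it was trained on the 1000-class ImageNet dataset.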
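To put a number on model size, the sketch below counts learnable parameters for the layer layout used by the implementation later in this article. The helper names are hypothetical. The `conv_bias` flag is an assumption added for comparison: the article's code keeps the default bias in every `Conv2d`, while many implementations drop it in front of batch normalization.

```python
def conv_bn_params(c_in, c_out, kernel, conv_bias=True):
    """Learnable parameters of one Conv2d + BatchNorm2d unit (LeakyReLU has none)."""
    conv = kernel * kernel * c_in * c_out + (c_out if conv_bias else 0)
    bn = 2 * c_out                      # learnable scale and shift
    return conv + bn

def darknet53_params(num_classes=10, conv_bias=True):
    total = conv_bn_params(3, 32, 3, conv_bias)                 # stem
    c_in = 32
    for c_out, n_units in [(64, 1), (128, 2), (256, 8), (512, 8), (1024, 4)]:
        total += conv_bn_params(c_in, c_out, 3, conv_bias)      # stride-2 convolution
        unit = (conv_bn_params(c_out, c_out // 2, 1, conv_bias)
                + conv_bn_params(c_out // 2, c_out, 3, conv_bias))
        total += n_units * unit                                 # residual units
        c_in = c_out
    total += 1024 * num_classes + num_classes                   # fully connected layer
    return total

print(f"{darknet53_params(10) / 1e6:.1f}M parameters (10 classes)")
print(f"{darknet53_params(1000, conv_bias=False) / 1e6:.1f}M parameters (1000 classes, no conv bias)")
```

Both counts come out a little above 40 million, so the network is compact for its accuracy but not small in absolute terms.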

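Overall, Darknet53 is an effective convolutional neural network with a good balance of accuracy and efficiency. It has become a widely used backbone in modern computer vision and has been applied to tasks including image classification, object detection, and face recognition.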

Darknet53 Source Code (PyTorch version)

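The dataset is downloaded automatically when the code runs. If your network is slow, you can download the CIFAR dataset yourself from the link I shared.

Link: Baidu Netdisk

Extraction code: kd9a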

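This code is intended to run on a GPU. If you have no GPU and the run fails, remove device and the .to(device) calls from the code so that it runs on the default CPU.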

If the run fails because the GPU has too little memory, reduce batch_size in main().

    import torch
    import torch.nn as nn
    from torch.utils.data import DataLoader
    from torchvision import transforms
    from torchvision.datasets import CIFAR10


    class ConvBnLeaky(nn.Module):
        """Conv2d -> BatchNorm2d -> LeakyReLU(0.1), the basic Darknet unit."""

        def __init__(self, in_it, out_it, kernels, padding=0, strides=1):
            super(ConvBnLeaky, self).__init__()
            self.convs = nn.Sequential(
                nn.Conv2d(in_it, out_it, kernels, padding=padding, stride=strides),
                nn.BatchNorm2d(out_it),
                nn.LeakyReLU(0.1, True)
            )

        def forward(self, x):
            x = self.convs(x)
            return x


    class Resnet_Block(nn.Module):
        """A stack of num_block residual units, each computing x + Conv3x3(Conv1x1(x))."""

        def __init__(self, ch, num_block=1):
            super(Resnet_Block, self).__init__()
            self.module_list = nn.ModuleList()
            for _ in range(num_block):
                resblock = nn.Sequential(
                    ConvBnLeaky(ch, ch // 2, 1),                    # 1x1 conv halves the channels
                    ConvBnLeaky(ch // 2, ch, kernels=3, padding=1)  # 3x3 conv restores them
                )
                self.module_list.append(resblock)

        def forward(self, x):
            for i in self.module_list:
                x = i(x) + x  # shortcut connection
            return x


    class Darknet_53(nn.Module):
        def __init__(self, num_classes=10):
            super(Darknet_53, self).__init__()
            # Stem convolution, first stride-2 convolution, 1 residual unit
            self.later_1 = nn.Sequential(
                ConvBnLeaky(3, 32, 3, padding=1),
                ConvBnLeaky(32, 64, 3, padding=1, strides=2),
                Resnet_Block(64, 1)
            )
            # Each later stage: one stride-2 convolution plus residual units
            self.later_2 = nn.Sequential(
                ConvBnLeaky(64, 128, 3, padding=1, strides=2),
                Resnet_Block(128, 2)
            )
            self.later_3 = nn.Sequential(
                ConvBnLeaky(128, 256, 3, padding=1, strides=2),
                Resnet_Block(256, 8)
            )
            self.later_4 = nn.Sequential(
                ConvBnLeaky(256, 512, 3, padding=1, strides=2),
                Resnet_Block(512, 8)
            )
            self.later_5 = nn.Sequential(
                ConvBnLeaky(512, 1024, 3, padding=1, strides=2),
                Resnet_Block(1024, 4)
            )
            # Classification head: global average pooling + fully connected layer
            self.pool = nn.AdaptiveAvgPool2d((1, 1))
            self.fc = nn.Linear(1024, num_classes)

        def forward(self, x):
            x = self.later_1(x)
            x = self.later_2(x)
            x = self.later_3(x)
            x = self.later_4(x)
            x = self.later_5(x)
            x = self.pool(x)
            # flatten keeps the batch dimension even for a batch of size 1,
            # which torch.squeeze would remove
            x = torch.flatten(x, 1)
            x = self.fc(x)
            return x


    def main():
        Root_file = 'cifar'
        # download=True fetches CIFAR-10 into Root_file if it is not already there
        train_data = CIFAR10(Root_file, train=True, download=True,
                             transform=transforms.ToTensor())
        data = DataLoader(train_data, batch_size=64, shuffle=True)
        # Use the GPU when one is available, otherwise fall back to the CPU
        device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
        net = Darknet_53().to(device)
        print(net)
        Cross = nn.CrossEntropyLoss().to(device)
        optimizer = torch.optim.Adam(net.parameters(), 0.001)
        for epoch in range(10):
            for img, label in data:
                img = img.to(device)
                label = label.to(device)
                output = net(img)
                loss = Cross(output, label)
                # Clear old gradients before backward(); calling zero_grad()
                # between backward() and step() would discard the new gradients
                optimizer.zero_grad()
                loss.backward()
                optimizer.step()
                pre = torch.argmax(output, 1)
                num = (pre == label).sum().item()
                acc = num / img.shape[0]
                loss_val = loss.item()
            # Report the metrics of the last batch of the epoch
            print("epoch:", epoch)
            print("acc:", acc)
            print("loss:", loss_val)


    if __name__ == '__main__':
        main()

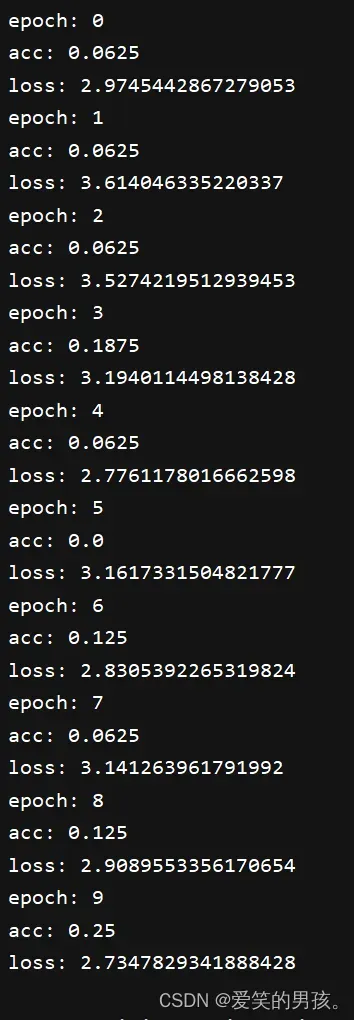

Results after training for 10 epochs

Network structure

This is the output of print(net) with its indentation restored. Consecutive identical residual units are shown in the collapsed "(0-7): 8 x" form that recent PyTorch versions print for a ModuleList.

    Darknet_53(
      (later_1): Sequential(
        (0): ConvBnLeaky(
          (convs): Sequential(
            (0): Conv2d(3, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
            (1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): LeakyReLU(negative_slope=0.1, inplace=True)
          )
        )
        (1): ConvBnLeaky(
          (convs): Sequential(
            (0): Conv2d(32, 64, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
            (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): LeakyReLU(negative_slope=0.1, inplace=True)
          )
        )
        (2): Resnet_Block(
          (module_list): ModuleList(
            (0): Sequential(
              (0): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(64, 32, kernel_size=(1, 1), stride=(1, 1))
                  (1): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
              (1): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(32, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
                  (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
            )
          )
        )
      )
      (later_2): Sequential(
        (0): ConvBnLeaky(
          (convs): Sequential(
            (0): Conv2d(64, 128, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
            (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): LeakyReLU(negative_slope=0.1, inplace=True)
          )
        )
        (1): Resnet_Block(
          (module_list): ModuleList(
            (0-1): 2 x Sequential(
              (0): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(128, 64, kernel_size=(1, 1), stride=(1, 1))
                  (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
              (1): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(64, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
                  (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
            )
          )
        )
      )
      (later_3): Sequential(
        (0): ConvBnLeaky(
          (convs): Sequential(
            (0): Conv2d(128, 256, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
            (1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): LeakyReLU(negative_slope=0.1, inplace=True)
          )
        )
        (1): Resnet_Block(
          (module_list): ModuleList(
            (0-7): 8 x Sequential(
              (0): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(256, 128, kernel_size=(1, 1), stride=(1, 1))
                  (1): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
              (1): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(128, 256, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
                  (1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
            )
          )
        )
      )
      (later_4): Sequential(
        (0): ConvBnLeaky(
          (convs): Sequential(
            (0): Conv2d(256, 512, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
            (1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): LeakyReLU(negative_slope=0.1, inplace=True)
          )
        )
        (1): Resnet_Block(
          (module_list): ModuleList(
            (0-7): 8 x Sequential(
              (0): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(512, 256, kernel_size=(1, 1), stride=(1, 1))
                  (1): BatchNorm2d(256, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
              (1): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(256, 512, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
                  (1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
            )
          )
        )
      )
      (later_5): Sequential(
        (0): ConvBnLeaky(
          (convs): Sequential(
            (0): Conv2d(512, 1024, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1))
            (1): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
            (2): LeakyReLU(negative_slope=0.1, inplace=True)
          )
        )
        (1): Resnet_Block(
          (module_list): ModuleList(
            (0-3): 4 x Sequential(
              (0): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(1024, 512, kernel_size=(1, 1), stride=(1, 1))
                  (1): BatchNorm2d(512, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
              (1): ConvBnLeaky(
                (convs): Sequential(
                  (0): Conv2d(512, 1024, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
                  (1): BatchNorm2d(1024, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
                  (2): LeakyReLU(negative_slope=0.1, inplace=True)
                )
              )
            )
          )
        )
      )
      (pool): AdaptiveAvgPool2d(output_size=(1, 1))
      (fc): Linear(in_features=1024, out_features=10, bias=True)
    )
