有关条件GAN(cgan)的相关原理,可以参考:

GAN系列之CGAN原理简介以及pytorch项目代码实现

其他类型的GAN原理介绍以及应用,可以查看我的GANs专栏

1.数据集介绍,加载数据

依旧使用到的是我们的老朋友—–MNIST手写数字数据集, 本文不再详细做介绍

相关数据集介绍可以参考:深度学习入门–MNIST数据集及创建自己的手写数字数据集

传统GAN生成手写数字参考:入门GAN实战—生成MNIST手写数据集代码实现pytorch

DCGAN生成手写数字参考:Pytorch 使用DCGAN生成MNIST手写数字 入门级教程

# 独热编码

def one_hot(x, class_count=10):

return torch.eye(class_count)[x, :]

transform =transforms.Compose([transforms.ToTensor(),

transforms.Normalize(0.5, 0.5)])

# 这个数据集其实包含两部分,第一部分是数据,第二部分是标签 print(dataset[0])

#这个第二部分就是我们所需要的condition,这个condition是数值类型,1就是1,2就是2。

#作为输入的condition并不是很合适,一种处理方法就是作为一种向量输入,就是独热编码化。

#比如说现在有10个类别,10个类别将被独热编码为长度为10的tensor,使用这个tensor作为我们的condition是比较合适的

dataset = torchvision.datasets.MNIST('data',

train=True,

transform=transform,

target_transform=one_hot,

download=False)

dataloader = data.DataLoader(dataset, batch_size=64, shuffle=True)这里是作者使用one-hot编码的一个小技巧

One-Hot编码,又称为一位有效编码,主要是采用位状态寄存器来对个状态进行编码,每个状态都由他独立的寄存器位,并且在任意时候只有一位有效。独热编码 是利用0和1表示一些参数,使用N位状态寄存器来对N个状态进行编码。

例如:参考数字手写体识别中:如数字字体识别0~9中,6的独热编码为

0000001000

二、定义生成器

# 定义生成器

class Generator(nn.Module):

def __init__(self):

super(Generator, self).__init__()

self.linear1 = nn.Linear(10, 128 * 7 * 7)

self.bn1 = nn.BatchNorm1d(128 * 7 * 7)

self.linear2 = nn.Linear(100, 128 * 7 * 7)

self.bn2 = nn.BatchNorm1d(128 * 7 * 7)

self.deconv1 = nn.ConvTranspose2d(256, 128,

kernel_size=(3, 3),

padding=1)

self.bn3 = nn.BatchNorm2d(128)

self.deconv2 = nn.ConvTranspose2d(128, 64,

kernel_size=(4, 4),

stride=2,

padding=1)

self.bn4 = nn.BatchNorm2d(64)

self.deconv3 = nn.ConvTranspose2d(64, 1,

kernel_size=(4, 4),

stride=2,

padding=1)

def forward(self, x1, x2): # X1代表label X2代表image

x1 = F.relu(self.linear1(x1))

x1 = self.bn1(x1)

x1 = x1.view(-1, 128, 7, 7)

x2 = F.relu(self.linear2(x2))

x2 = self.bn2(x2)

x2 = x2.view(-1, 128, 7, 7)

x = torch.cat([x1, x2], axis=1)

x = F.relu(self.deconv1(x))

x = self.bn3(x)

x = F.relu(self.deconv2(x))

x = self.bn4(x)

x = torch.tanh(self.deconv3(x))

return x3. 定义判别器

# 定义判别器

# input:1,28,28的图片以及长度为10的condition

class Discriminator(nn.Module):

def __init__(self):

super(Discriminator, self).__init__()

self.linear = nn.Linear(10, 1*28*28)

self.conv1 = nn.Conv2d(2, 64, kernel_size=3, stride=2)

self.conv2 = nn.Conv2d(64, 128, kernel_size=3, stride=2)

self.bn = nn.BatchNorm2d(128)

self.fc = nn.Linear(128*6*6, 1) # 输出一个概率值

def forward(self, x1, x2):

x1 =F.leaky_relu(self.linear(x1))

x1 = x1.view(-1, 1, 28, 28)

x = torch.cat([x1, x2], axis=1)

x = F.dropout2d(F.leaky_relu(self.conv1(x)))

x = F.dropout2d(F.leaky_relu(self.conv2(x)))

x = self.bn(x)

x = x.view(-1, 128*6*6)

x = torch.sigmoid(self.fc(x))

return x

四、完整代码展示

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

import numpy as np

import matplotlib.pyplot as plt

import torchvision

from torchvision import transforms

from torch.utils import data

import os

import glob

from PIL import Image

# 独热编码

# 输入x代表默认的torchvision返回的类比值,class_count类别值为10

def one_hot(x, class_count=10):

return torch.eye(class_count)[x, :] # 切片选取,第一维选取第x个,第二维全要

transform =transforms.Compose([transforms.ToTensor(),

transforms.Normalize(0.5, 0.5)])

dataset = torchvision.datasets.MNIST('data',

train=True,

transform=transform,

target_transform=one_hot,

download=False)

dataloader = data.DataLoader(dataset, batch_size=64, shuffle=True)

# 定义生成器

class Generator(nn.Module):

def __init__(self):

super(Generator, self).__init__()

self.linear1 = nn.Linear(10, 128 * 7 * 7)

self.bn1 = nn.BatchNorm1d(128 * 7 * 7)

self.linear2 = nn.Linear(100, 128 * 7 * 7)

self.bn2 = nn.BatchNorm1d(128 * 7 * 7)

self.deconv1 = nn.ConvTranspose2d(256, 128,

kernel_size=(3, 3),

padding=1)

self.bn3 = nn.BatchNorm2d(128)

self.deconv2 = nn.ConvTranspose2d(128, 64,

kernel_size=(4, 4),

stride=2,

padding=1)

self.bn4 = nn.BatchNorm2d(64)

self.deconv3 = nn.ConvTranspose2d(64, 1,

kernel_size=(4, 4),

stride=2,

padding=1)

def forward(self, x1, x2):

x1 = F.relu(self.linear1(x1))

x1 = self.bn1(x1)

x1 = x1.view(-1, 128, 7, 7)

x2 = F.relu(self.linear2(x2))

x2 = self.bn2(x2)

x2 = x2.view(-1, 128, 7, 7)

x = torch.cat([x1, x2], axis=1)

x = F.relu(self.deconv1(x))

x = self.bn3(x)

x = F.relu(self.deconv2(x))

x = self.bn4(x)

x = torch.tanh(self.deconv3(x))

return x

# 定义判别器

# input:1,28,28的图片以及长度为10的condition

class Discriminator(nn.Module):

def __init__(self):

super(Discriminator, self).__init__()

self.linear = nn.Linear(10, 1*28*28)

self.conv1 = nn.Conv2d(2, 64, kernel_size=3, stride=2)

self.conv2 = nn.Conv2d(64, 128, kernel_size=3, stride=2)

self.bn = nn.BatchNorm2d(128)

self.fc = nn.Linear(128*6*6, 1) # 输出一个概率值

def forward(self, x1, x2):

x1 =F.leaky_relu(self.linear(x1))

x1 = x1.view(-1, 1, 28, 28)

x = torch.cat([x1, x2], axis=1)

x = F.dropout2d(F.leaky_relu(self.conv1(x)))

x = F.dropout2d(F.leaky_relu(self.conv2(x)))

x = self.bn(x)

x = x.view(-1, 128*6*6)

x = torch.sigmoid(self.fc(x))

return x

# 初始化模型

device = 'cuda' if torch.cuda.is_available() else 'cpu'

gen = Generator().to(device)

dis = Discriminator().to(device)

# 损失计算函数

loss_function = torch.nn.BCELoss()

# 定义优化器

d_optim = torch.optim.Adam(dis.parameters(), lr=1e-5)

g_optim = torch.optim.Adam(gen.parameters(), lr=1e-4)

# 定义可视化函数

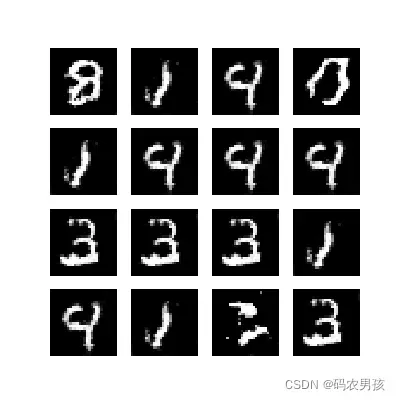

def generate_and_save_images(model, epoch, label_input, noise_input):

predictions = np.squeeze(model(label_input, noise_input).cpu().numpy())

fig = plt.figure(figsize=(4, 4))

for i in range(predictions.shape[0]):

plt.subplot(4, 4, i + 1)

plt.imshow((predictions[i] + 1) / 2, cmap='gray')

plt.axis("off")

plt.show()

noise_seed = torch.randn(16, 100, device=device)

label_seed = torch.randint(0, 10, size=(16,))

label_seed_onehot = one_hot(label_seed).to(device)

print(label_seed)

# print(label_seed_onehot)

# 开始训练

D_loss = []

G_loss = []

# 训练循环

for epoch in range(150):

d_epoch_loss = 0

g_epoch_loss = 0

count = len(dataloader.dataset)

# 对全部的数据集做一次迭代

for step, (img, label) in enumerate(dataloader):

img = img.to(device)

label = label.to(device)

size = img.shape[0]

random_noise = torch.randn(size, 100, device=device)

d_optim.zero_grad()

real_output = dis(label, img)

d_real_loss = loss_function(real_output,

torch.ones_like(real_output, device=device)

)

d_real_loss.backward() #求解梯度

# 得到判别器在生成图像上的损失

gen_img = gen(label,random_noise)

fake_output = dis(label, gen_img.detach()) # 判别器输入生成的图片,f_o是对生成图片的预测结果

d_fake_loss = loss_function(fake_output,

torch.zeros_like(fake_output, device=device))

d_fake_loss.backward()

d_loss = d_real_loss + d_fake_loss

d_optim.step() # 优化

# 得到生成器的损失

g_optim.zero_grad()

fake_output = dis(label, gen_img)

g_loss = loss_function(fake_output,

torch.ones_like(fake_output, device=device))

g_loss.backward()

g_optim.step()

with torch.no_grad():

d_epoch_loss += d_loss.item()

g_epoch_loss += g_loss.item()

with torch.no_grad():

d_epoch_loss /= count

g_epoch_loss /= count

D_loss.append(d_epoch_loss)

G_loss.append(g_epoch_loss)

if epoch % 10 == 0:

print('Epoch:', epoch)

generate_and_save_images(gen, epoch, label_seed_onehot, noise_seed)5.结果展示

随机生成的条件

根据这个条件生成的手写数字

文章出处登录后可见!

已经登录?立即刷新