Basic Hands-On Practice: FashionMNIST Fashion Classification

To tie the introductory PyTorch material together, we now work through a basic hands-on example.

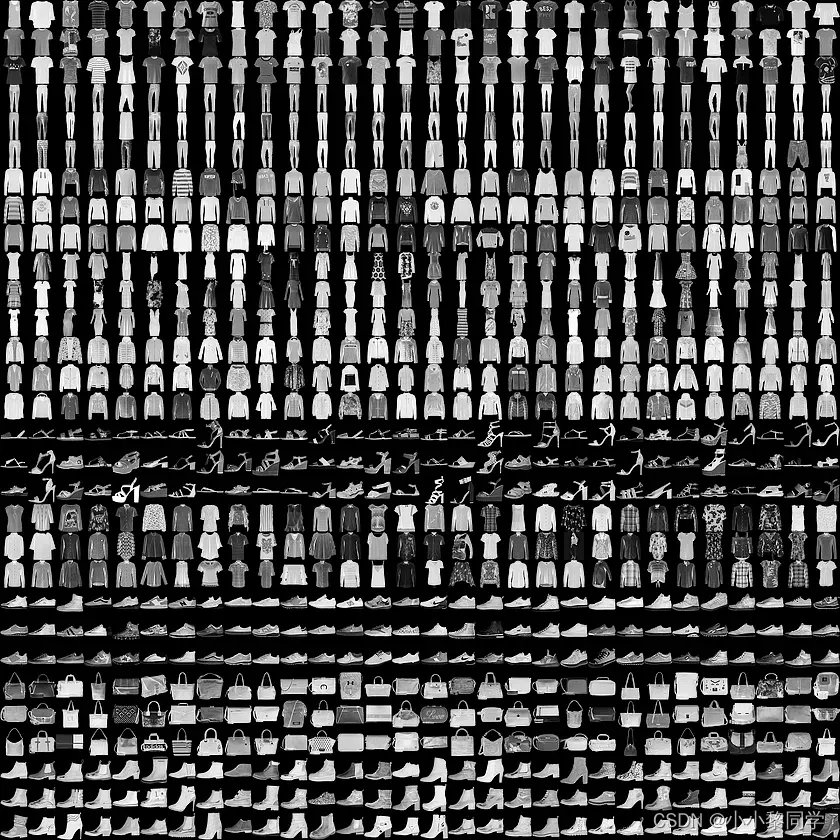

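Our task here is to classify images of 10 categories of "fashion" items, using the FashionMNIST dataset. The figure above shows sample training images from FashionMNIST, where each small tile is one sample.

Each sample is a single-channel grayscale image of 28*28 pixels and belongs to one of 10 classes.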

First, import the required packages.

    import os
    import numpy as np
    import pandas as pd
    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torch.utils.data import Dataset, DataLoader

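Configure the training environment and hyperparameters

    # Configure the GPU; there are two ways to do this
    ## Option 1: use os.environ
    # os.environ['CUDA_VISIBLE_DEVICES'] = '0'
    ## Option 2: use a "device" object, then call .to(device) on whatever should live on the GPU
    # Note: "cuda:1" selects the second GPU; on a single-GPU machine use "cuda:0"
    device = torch.device("cuda:1" if torch.cuda.is_available() else "cpu")

    ## Other hyperparameters: batch_size, num_workers, learning rate, and the total number of epochs
    batch_size = 256
    num_workers = 0   # On Windows set this to 0, otherwise multi-process data loading may raise errors
    lr = 1e-4
    epochs = 20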
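As a minimal sketch of the second option above, the snippet below picks a device with a CPU fallback and moves a tensor and a small module onto it. The tensor `x` and the `nn.Linear` layer are illustrative stand-ins, not part of the pipeline in this article.

```python
import torch
import torch.nn as nn

# Fall back to the CPU when no GPU is visible, so the same code runs anywhere.
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

x = torch.zeros(4, 3).to(device)      # illustrative input batch
layer = nn.Linear(3, 2).to(device)    # illustrative module

y = layer(x)                          # both operands live on the same device
print(y.device.type == device.type)
```

Inputs and model have to sit on the same device; mixing a CPU tensor with a GPU model raises a runtime error.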

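Reading and loading the data

Two approaches are shown here:

**·** Download and use a built-in dataset provided by PyTorch

**·** Download data stored in csv format from a website, read it in, and convert it to the expected format

The first approach only works for common datasets such as MNIST and CIFAR10, for which PyTorch officially provides downloads. It is typically used to test a method quickly (for example, to check whether an idea works on MNIST).

The second approach requires building your own Dataset, which is very important when applying PyTorch to your own work.

In addition, the data needs some necessary transformations: for example, images must be resized to a consistent size so they can be fed to the network for training, the data must be converted to the Tensor class, and so on.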

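For how to build a Dataset, see the article "初识Dataset与DataLoader-pytorch" (A First Look at Dataset and DataLoader in PyTorch).

The transformations above are easy to perform with the torchvision package, the official PyTorch library for image processing; the built-in datasets mentioned above also come from it. One of PyTorch's great conveniences is that it is a whole "ecosystem", with official and third-party support across many fields.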

    # First, set up the data transforms
    from torchvision import transforms

    image_size = 28
    data_transform = transforms.Compose([
        transforms.ToPILImage(),   # Depends on how the data is read later; not needed with the built-in dataset
        transforms.Resize(image_size),
        transforms.ToTensor()
    ])
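To make the effect of `ToTensor()` concrete, here is a rough equivalent written with plain NumPy and torch operations. It is only a sketch for one case, a uint8 array in height x width x channel layout; `fake_image` is made-up data.

```python
import numpy as np
import torch

# A made-up 28x28 single-channel image in the H x W x C, uint8 layout.
fake_image = np.full((28, 28, 1), 255, dtype=np.uint8)
fake_image[0, 0, 0] = 0

# Rough equivalent of ToTensor() for this input: channels first, scaled to [0, 1].
tensor = torch.from_numpy(fake_image).permute(2, 0, 1).float().div(255)

print(tensor.shape)                               # torch.Size([1, 28, 28])
print(tensor.min().item(), tensor.max().item())   # 0.0 1.0
```

The channels-first layout is what `nn.Conv2d` expects once the DataLoader adds the batch dimension in front.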

    ## Loading method 1: use the dataset bundled with torchvision; the download may take a while
    from torchvision import datasets

    train_data = datasets.FashionMNIST(root='./', train=True, download=True, transform=data_transform)
    test_data = datasets.FashionMNIST(root='./', train=False, download=True, transform=data_transform)

    ## Loading method 2: read the csv data and build a custom Dataset class
    # csv data download link: https://www.kaggle.com/zalando-research/fashionmnist
    class FMDataset(Dataset):
        def __init__(self, df, transform=None):
            self.df = df
            self.transform = transform
            # Column 0 holds the label; the remaining columns hold the pixel values
            self.images = df.iloc[:,1:].values.astype(np.uint8)
            self.labels = df.iloc[:, 0].values

        def __len__(self):
            return len(self.images)

        def __getitem__(self, idx):
            # Restore the flat row of pixels to a 28x28 single-channel image
            image = self.images[idx].reshape(28,28,1)
            label = int(self.labels[idx])
            if self.transform is not None:
                image = self.transform(image)
            else:
                image = torch.tensor(image/255., dtype=torch.float)
            label = torch.tensor(label, dtype=torch.long)
            return image, label

    train_df = pd.read_csv("./FashionMNIST/fashion-mnist_train.csv")
    test_df = pd.read_csv("./FashionMNIST/fashion-mnist_test.csv")
    train_data = FMDataset(train_df, data_transform)
    test_data = FMDataset(test_df, data_transform)
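The csv layout can be tried out without downloading anything. The sketch below builds a synthetic DataFrame with the same shape of row (one label column followed by 784 pixel columns) and reads it through a simplified dataset. `TinyCsvDataset` and the random data are hypothetical stand-ins for illustration, not the class or files used above.

```python
import numpy as np
import pandas as pd
import torch
from torch.utils.data import Dataset

class TinyCsvDataset(Dataset):
    """Hypothetical stand-in: label in column 0, then 784 pixel columns."""
    def __init__(self, df):
        self.images = df.iloc[:, 1:].values.astype(np.uint8)
        self.labels = df.iloc[:, 0].values

    def __len__(self):
        return len(self.images)

    def __getitem__(self, idx):
        # Reshape the flat row to 1 x 28 x 28 (channels first) and scale to [0, 1].
        image = torch.tensor(self.images[idx].reshape(1, 28, 28) / 255., dtype=torch.float)
        label = torch.tensor(int(self.labels[idx]), dtype=torch.long)
        return image, label

# Synthetic data: 5 rows, labels 0..4, random pixel values in [0, 255].
rng = np.random.default_rng(0)
pixels = rng.integers(0, 256, size=(5, 784))
labels = np.arange(5).reshape(5, 1)
df = pd.DataFrame(np.hstack([labels, pixels]))

dataset = TinyCsvDataset(df)
image, label = dataset[3]
print(len(dataset), image.shape, label.item())
```

A Dataset only has to implement `__len__` and `__getitem__`; batching and shuffling are left to the DataLoader.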

Once the training and test datasets are built, define DataLoader objects to load the data during training and testing.

    train_loader = DataLoader(train_data, batch_size=batch_size, shuffle=True, num_workers=num_workers, drop_last=True)
    test_loader = DataLoader(test_data, batch_size=batch_size, shuffle=False, num_workers=num_workers)
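The effect of `drop_last` is easiest to see on a tiny example. The sketch below uses a made-up `TensorDataset` of 10 samples, which has nothing to do with the real data, and compares the number of batches with and without it.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# 10 made-up samples with a batch size of 4 leave a final partial batch of 2.
data = TensorDataset(torch.zeros(10, 1, 28, 28), torch.zeros(10, dtype=torch.long))

keep_last = DataLoader(data, batch_size=4, shuffle=False, drop_last=False)
drop_last = DataLoader(data, batch_size=4, shuffle=True, drop_last=True)

print(len(keep_last))   # 3 batches: 4 + 4 + 2
print(len(drop_last))   # 2 batches: the partial batch is dropped
```

With `drop_last=True` every training batch has the same size, at the cost of skipping a few samples each epoch.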

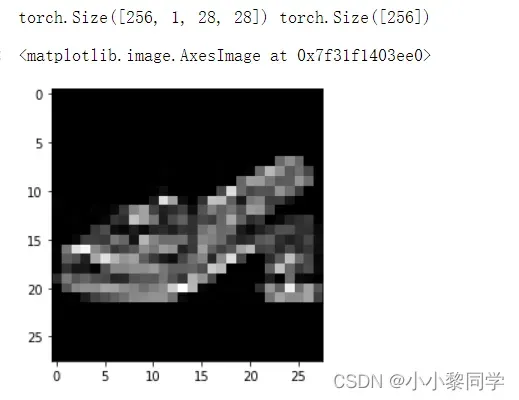

After loading, we can visualize some of the data, mainly to verify that it was read correctly.

    import matplotlib.pyplot as plt

    image, label = next(iter(train_loader))
    print(image.shape, label.shape)
    plt.imshow(image[0][0], cmap="gray")

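Here iter() creates an iterator over the batches in train_loader, just as a for loop would (it iterates over batches, not over the samples inside one batch), and next() takes the first batch. In image[0][0], the first 0 selects the first sample in that batch and the second 0 selects the channel.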
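The indexing described above can be checked on a small made-up loader. The tensors here are random placeholders, not FashionMNIST images.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# 6 made-up single-channel 28x28 images, served in batches of 3.
loader = DataLoader(
    TensorDataset(torch.rand(6, 1, 28, 28), torch.arange(6)),
    batch_size=3, shuffle=False,
)

batches = iter(loader)            # an iterator over batches, not over samples
image, label = next(batches)      # the first batch
print(image.shape)                # torch.Size([3, 1, 28, 28])
print(image[0][0].shape)          # torch.Size([28, 28]): first sample, channel 0
print(label.tolist())             # [0, 1, 2]

# A for loop walks the same batches from the start.
print(sum(1 for _ in loader))     # 2
```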

Output:

CNN network

    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            # Two conv blocks: convolution -> ReLU -> 2x2 max pooling -> dropout
            self.conv = nn.Sequential(
                nn.Conv2d(1, 32, 5),
                nn.ReLU(),
                nn.MaxPool2d(2, stride=2),
                nn.Dropout(0.3),
                nn.Conv2d(32, 64, 5),
                nn.ReLU(),
                nn.MaxPool2d(2, stride=2),
                nn.Dropout(0.3)
            )
            # Classifier head: 64 feature maps of 4x4 -> 512 -> 10 classes
            self.fc = nn.Sequential(
                nn.Linear(64*4*4, 512),
                nn.ReLU(),
                nn.Linear(512, 10)
            )

        def forward(self, x):
            x = self.conv(x)
            x = x.view(-1, 64*4*4)   # flatten the feature maps for the linear layers
            x = self.fc(x)
            # x = nn.functional.normalize(x)
            return x

    model = Net()
    model = model.cuda()   # move the model to the GPU
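The `64*4*4` input size of the first linear layer follows from how each layer shrinks the 28x28 image. A short sketch of that arithmetic, using the standard output-size formula for unpadded convolution and pooling (the helper `conv_out` is introduced here for illustration):

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1

size = 28
size = conv_out(size, kernel=5)             # Conv2d(1, 32, 5)   -> 24
size = conv_out(size, kernel=2, stride=2)   # MaxPool2d(2, 2)    -> 12
size = conv_out(size, kernel=5)             # Conv2d(32, 64, 5)  -> 8
size = conv_out(size, kernel=2, stride=2)   # MaxPool2d(2, 2)    -> 4

flattened = 64 * size * size
print(size, flattened)                      # 4 1024
```

If the input size or any kernel size changes, this number changes too, and the first `nn.Linear` has to be adjusted to match.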

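Setting the loss function

We use the CrossEntropy loss that ships with the torch.nn module. It takes integer class labels directly, in effect handling the one-hot conversion internally when computing the CE loss. The labels must start from 0, and the model must not end with a softmax layer (the loss is computed from logits). This also shows that the parts of a PyTorch training setup are not independent and have to be considered together.

    criterion = nn.CrossEntropyLoss()
    # Optional class weights: weight must be a float tensor on the same device as the model output
    # criterion = nn.CrossEntropyLoss(weight=torch.tensor([1,1,1,1,3,1,1,1,1,1], dtype=torch.float).cuda())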
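To illustrate why the model should output raw logits, the sketch below compares `nn.CrossEntropyLoss` with a manual log-softmax followed by picking the true-class entry. The logits and labels are made up for the example.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Made-up logits for 3 samples over 10 classes, with integer labels starting at 0.
torch.manual_seed(0)
logits = torch.randn(3, 10)
labels = torch.tensor([0, 4, 9])

loss = nn.CrossEntropyLoss()(logits, labels)

# Manual version: log-softmax, pick the entry of the true class, negate, average.
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(3), labels].mean()

print(torch.allclose(loss, manual))   # True
```

Because the softmax is already applied inside the loss, adding another softmax layer at the end of the model would apply it twice.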

Setting the optimizer

    # Use the Adam optimizer (this passes 0.001 directly rather than the lr defined above)
    optimizer = optim.Adam(model.parameters(), lr=0.001)

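Training and testing (validation)

Wrap each in its own function so that it is easy to call later.

Pay attention to the main differences between the two:

**·** Setting the model state

**·** Whether the optimizer's gradients need to be zeroed

**·** Whether the loss needs to be backpropagated through the network

**·** Whether the optimizer needs to update the parameters at every step

In addition, for the testing or validation pass, we can compute the classification accuracy.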
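The first of these differences, the model state, can be sketched with a small made-up module containing Dropout. This is an illustration of `train()`, `eval()`, and `torch.no_grad()` only, not the network used in this article.

```python
import torch
import torch.nn as nn

# A made-up module containing Dropout, so the two modes behave differently.
net = nn.Sequential(nn.Linear(4, 4), nn.Dropout(0.5))
x = torch.ones(2, 4)

net.train()                        # training mode: Dropout randomly zeroes activations
out_train = net(x)
print(out_train.requires_grad)     # True: a graph is recorded for backward()

net.eval()                         # evaluation mode: Dropout is a no-op
with torch.no_grad():              # no graph is recorded, saving memory and time
    out_a = net(x)
    out_b = net(x)
print(out_a.requires_grad)         # False
print(torch.equal(out_a, out_b))   # True: deterministic in eval mode
```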

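    def train(epoch):
        model.train()   # training mode: Dropout is active
        train_loss = 0
        for data, label in train_loader:
            data, label = data.cuda(), label.cuda()
            optimizer.zero_grad()              # clear gradients from the previous step
            output = model(data)
            loss = criterion(output, label)
            loss.backward()                    # backpropagate the loss
            optimizer.step()                   # update the parameters
            # loss is the batch mean, so weight it by the batch size
            train_loss += loss.item()*data.size(0)
        # Note: train_loader uses drop_last=True, so the final partial batch is
        # skipped and this average comes out slightly low
        train_loss = train_loss/len(train_loader.dataset)
        print('Epoch: {} \tTraining Loss: {:.6f}'.format(epoch, train_loss))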

    def val(epoch):
        model.eval()   # evaluation mode: Dropout is disabled
        val_loss = 0
        gt_labels = []
        pred_labels = []
        with torch.no_grad():   # no gradients are needed for validation
            for data, label in test_loader:
                data, label = data.cuda(), label.cuda()
                output = model(data)
                preds = torch.argmax(output, 1)   # predicted class = largest logit
                gt_labels.append(label.cpu().numpy())
                pred_labels.append(preds.cpu().numpy())
                loss = criterion(output, label)
                val_loss += loss.item()*data.size(0)
        val_loss = val_loss/len(test_loader.dataset)
        gt_labels, pred_labels = np.concatenate(gt_labels), np.concatenate(pred_labels)
        acc = np.sum(gt_labels==pred_labels)/len(pred_labels)
        print('Epoch: {} \tValidation Loss: {:.6f}, Accuracy: {:.6f}'.format(epoch, val_loss, acc))

    for epoch in range(1, epochs+1):
        train(epoch)
        val(epoch)
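The bookkeeping in the two functions above, weighting each batch loss by its size and computing accuracy over the concatenated labels, can be sketched with plain NumPy. The per-batch numbers below are made up.

```python
import numpy as np

# Made-up per-batch results: (mean loss of the batch, true labels, predicted labels).
batches = [
    (0.50, np.array([0, 1, 2, 3]), np.array([0, 1, 2, 0])),
    (0.20, np.array([4, 5]),       np.array([4, 5])),
]

total_loss, gt_labels, pred_labels = 0.0, [], []
for batch_loss, gt, pred in batches:
    total_loss += batch_loss * len(gt)   # undo the per-batch mean
    gt_labels.append(gt)
    pred_labels.append(pred)

gt_labels, pred_labels = np.concatenate(gt_labels), np.concatenate(pred_labels)
mean_loss = total_loss / len(gt_labels)                    # per-sample average
acc = np.sum(gt_labels == pred_labels) / len(pred_labels)

print(round(mean_loss, 4), round(acc, 4))   # 0.4 0.8333
```

Simply averaging the two batch losses would give 0.35, which overweights the smaller batch; weighting by batch size gives the true per-sample mean.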

Output:

Epoch: 1 Training Loss: 0.672554

Epoch: 1 Validation Loss: 0.473798, Accuracy: 0.826200

Epoch: 2 Training Loss: 0.424969

Epoch: 2 Validation Loss: 0.365793, Accuracy: 0.868600

Epoch: 3 Training Loss: 0.361524

Epoch: 3 Validation Loss: 0.357469, Accuracy: 0.873200

Epoch: 4 Training Loss: 0.328624

Epoch: 4 Validation Loss: 0.316382, Accuracy: 0.886100

Epoch: 5 Training Loss: 0.305737

Epoch: 5 Validation Loss: 0.304265, Accuracy: 0.889900

Epoch: 6 Training Loss: 0.289633

Epoch: 6 Validation Loss: 0.284644, Accuracy: 0.893800

Epoch: 7 Training Loss: 0.275116

Epoch: 7 Validation Loss: 0.279674, Accuracy: 0.897900

Epoch: 8 Training Loss: 0.262604

Epoch: 8 Validation Loss: 0.260141, Accuracy: 0.904300

Epoch: 9 Training Loss: 0.251661

Epoch: 9 Validation Loss: 0.254229, Accuracy: 0.904900

Epoch: 10 Training Loss: 0.241646

Epoch: 10 Validation Loss: 0.244286, Accuracy: 0.909300

Epoch: 11 Training Loss: 0.235099

Epoch: 11 Validation Loss: 0.244644, Accuracy: 0.911100

Epoch: 12 Training Loss: 0.226326

Epoch: 12 Validation Loss: 0.244030, Accuracy: 0.908000

Epoch: 13 Training Loss: 0.221009

Epoch: 13 Validation Loss: 0.237542, Accuracy: 0.912900

Epoch: 14 Training Loss: 0.208733

Epoch: 14 Validation Loss: 0.237512, Accuracy: 0.910000

Epoch: 15 Training Loss: 0.205056

Epoch: 15 Validation Loss: 0.232690, Accuracy: 0.914900

Epoch: 16 Training Loss: 0.200654

Epoch: 16 Validation Loss: 0.229157, Accuracy: 0.914200

Epoch: 17 Training Loss: 0.194465

Epoch: 17 Validation Loss: 0.228105, Accuracy: 0.914200

Epoch: 18 Training Loss: 0.185152

Epoch: 18 Validation Loss: 0.230176, Accuracy: 0.916400

Epoch: 19 Training Loss: 0.183666

Epoch: 19 Validation Loss: 0.222372, Accuracy: 0.919900

Epoch: 20 Training Loss: 0.174856

Epoch: 20 Validation Loss: 0.227230, Accuracy: 0.916900

Saving the model

    save_path = "./FahionModel.pkl"
    # This pickles the entire model object, not just its parameters
    torch.save(model, save_path)
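A common alternative to pickling the whole model is to save only its `state_dict` and rebuild the architecture before loading. The sketch below shows that alternative on a small stand-in model; it is not what the code above does, and the file name is illustrative.

```python
import os
import tempfile
import torch
import torch.nn as nn

# A small stand-in model; the file name below is illustrative.
model = nn.Linear(4, 2)

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "model_state.pt")
    torch.save(model.state_dict(), path)         # save only the parameters

    restored = nn.Linear(4, 2)                   # rebuild the architecture first
    restored.load_state_dict(torch.load(path))   # then load the saved parameters

print(torch.equal(model.weight, restored.weight))   # True
```

Saving the `state_dict` keeps the file independent of the class definition's module path, whereas a fully pickled model needs the original class to be importable when it is loaded.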
