1. Definition of tensor.mean():

input = torch.randn(4, 4)

torch.mean(input, 0) is equivalent to input.mean(0).

Method reference: torch.mean(input, dim, keepdim=False, *, dtype=None, out=None) → Tensor

Parameters:

- input (Tensor) – the input tensor.

- dim (int or tuple of python:ints) – the dimension or dimensions to reduce.

- keepdim (bool) – whether the output tensor has dim retained or not.

Purpose: take the mean along the specified dim (across channels, down columns, or across rows, depending on the dimension).

Returns the mean value of each row of the input tensor in the given dimension dim. If dim is a list of dimensions, reduce over all of them.
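As a quick check of the equivalence stated above, the following minimal sketch compares the function form with the method form and shows how the shape changes. The variable names are illustrative only.

```python
import torch

torch.manual_seed(0)  # fixed seed so repeated runs use the same values
inp = torch.randn(4, 4)

# The function form and the method form compute the same reduction.
by_function = torch.mean(inp, 0)
by_method = inp.mean(0)

print(by_function.shape)                    # torch.Size([4])
print(torch.equal(by_function, by_method))  # True
```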

1.1 Simple examples: taking the mean of a tensor along different dimensions.

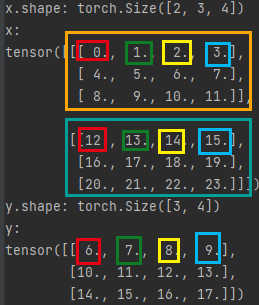

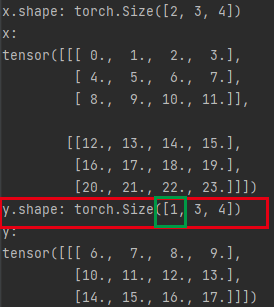

For example, for the following tensor with shape (C, H, W) = [2, 3, 4], take the mean along the C dimension, that is, across the channels.

import torch

x = torch.arange(24).view(2, 3, 4).float()

y = x.mean(0)

print("x.shape:", x.shape)

print("x:")

print(x)

print("y.shape:", y.shape)

print("y:")

print(y)

Output:

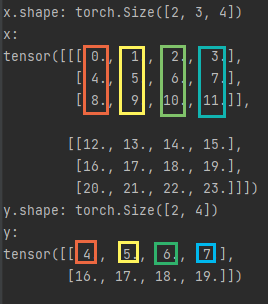

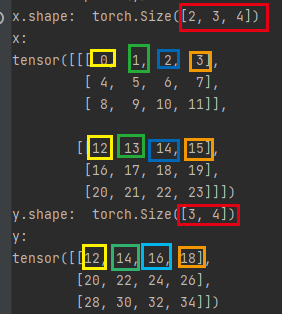
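To see what averaging over the C dimension means, the sketch below reproduces x.mean(0) by hand: with two channels, it is simply the element-wise average of x[0] and x[1]. The name manual is only for illustration.

```python
import torch

x = torch.arange(24).view(2, 3, 4).float()

y = x.mean(0)                # reduce over C: shape (2, 3, 4) -> (3, 4)
manual = (x[0] + x[1]) / 2   # element-wise average of the two channels

print(y.shape)                   # torch.Size([3, 4])
print(torch.allclose(y, manual)) # True
print(y[0].tolist())             # [6.0, 7.0, 8.0, 9.0]
```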

For the same tensor with shape (C, H, W) = [2, 3, 4], take the mean along the H dimension (Height), that is, down each column.

Code:

import torch

x = torch.arange(24).view(2, 3, 4).float()

y = x.mean(1)

print("x.shape:", x.shape)

print("x:")

print(x)

print("y.shape:", y.shape)

print("y:")

print(y)

Output:

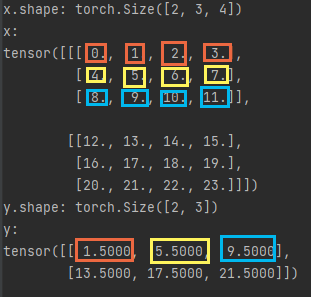

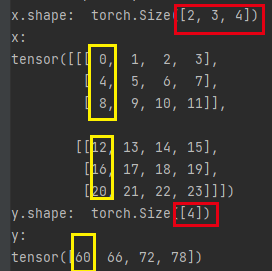

For the same tensor with shape (C, H, W) = [2, 3, 4], take the mean along the W dimension (Width), that is, across each row.

Code:

import torch

x = torch.arange(24).view(2, 3, 4).float()

y = x.mean(2)

print("x.shape:", x.shape)

print("x:")

print(x)

print("y.shape:", y.shape)

print("y:")

print(y)

Output:

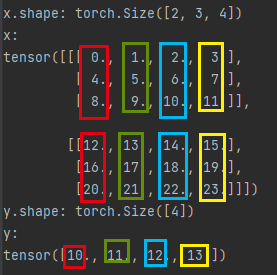
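The three examples above can be summarized by looking only at the shapes: reducing over a dimension removes it from the result. A small sketch, using the same x:

```python
import torch

x = torch.arange(24).view(2, 3, 4).float()

# Each reduction drops the dimension that was averaged over.
for dim in range(x.dim()):
    print(dim, tuple(x.mean(dim).shape))
# 0 (3, 4)
# 1 (2, 4)
# 2 (2, 3)

# For dim=2, every row of 4 values collapses to a single number.
print(x.mean(2)[0].tolist())   # [1.5, 5.5, 9.5]
```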

1.2 When dim is a list: y = x.mean([0, 1])

Code example:

import torch

x = torch.arange(24).view(2, 3, 4).float()

y = x.mean([0,1])

print("x.shape:", x.shape)

print("x:")

print(x)

print("y.shape:", y.shape)

print("y:")

print(y)

Output: this can be understood as first reducing over dim=1 and then over dim=0.
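A sketch of that interpretation: reducing over the list [0, 1] gives the same result as reducing over dim=1 and then over dim=0. The two agree here because every group being averaged contains the same number of elements.

```python
import torch

x = torch.arange(24).view(2, 3, 4).float()

y = x.mean([0, 1])            # reduce over C and H at once -> shape (4,)
chained = x.mean(1).mean(0)   # first dim=1, then dim=0

print(y.shape)                     # torch.Size([4])
print(y.tolist())                  # [10.0, 11.0, 12.0, 13.0]
print(torch.allclose(y, chained))  # True
```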

1.3 Negative dim: indexing starts at -1 for the last dimension and counts from right to left.

y = x.mean(-3) is equivalent to y = x.mean(0)

y = x.mean(-2) is equivalent to y = x.mean(1)

y = x.mean(-1) is equivalent to y = x.mean(2)
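These equivalences can be checked directly. A minimal sketch, assuming the same 3-D tensor x as in the earlier examples:

```python
import torch

x = torch.arange(24).view(2, 3, 4).float()

# For a 3-D tensor, dim=neg refers to the same axis as dim=neg + 3.
for neg in (-3, -2, -1):
    pos = neg + x.dim()
    same = torch.equal(x.mean(neg), x.mean(pos))
    print(neg, pos, same)
# -3 0 True
# -2 1 True
# -1 2 True
```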

1.4 keepdim defaults to False. What is the result when it is True?

If keepdim is True, the output tensor is of the same size as input except in the dimension(s) dim where it is of size 1. Otherwise, dim is squeezed (see torch.squeeze()), resulting in the output tensor having 1 (or len(dim)) fewer dimension(s).

Put plainly: the output tensor has the same size as the input tensor, except that the specified dim has size 1.

Example: y = x.mean(0, keepdim=True)

import torch

x = torch.arange(24).view(2, 3, 4).float()

y = x.mean(0,keepdim = True)

print("x.shape:", x.shape)

print("x:")

print(x)

print("y.shape:", y.shape)

print("y:")

print(y)

Output: dimension 0 is retained, but its size becomes 1.
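One common reason to use keepdim=True is broadcasting: because the reduced dimension is kept with size 1, the result can be subtracted from the original tensor directly. A minimal sketch (the names m and centered are illustrative):

```python
import torch

x = torch.arange(24).view(2, 3, 4).float()

m = x.mean(2, keepdim=True)   # shape (2, 3, 1) instead of (2, 3)
centered = x - m              # (2, 3, 4) - (2, 3, 1) broadcasts over the last dim

print(m.shape)                  # torch.Size([2, 3, 1])
print(centered[0, 0].tolist())  # [-1.5, -0.5, 0.5, 1.5]

# Without keepdim, the size-1 axis can be restored with unsqueeze.
print(torch.equal(m, x.mean(2).unsqueeze(2)))   # True
```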

2. Definition of tensor.sum():

Method reference: torch.sum(input, dim, keepdim=False, *, dtype=None) → Tensor

Parameters:

- input (Tensor) – the input tensor.

- dim (int or tuple of python:ints) – the dimension or dimensions to reduce.

- keepdim (bool) – whether the output tensor has dim retained or not.

Purpose: take the sum along the specified dim (across channels, down columns, or across rows, depending on the dimension).

Returns the sum of each row of the input tensor in the given dimension dim. If dim is a list of dimensions, reduce over all of them.
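The two reductions are closely related: the mean along a dimension is the sum along that dimension divided by its size. The sketch below also illustrates why the mean examples call .float() while the sum examples do not: sum works on integer tensors, whereas mean expects a floating-point (or complex) input.

```python
import torch

x = torch.arange(24).view(2, 3, 4)   # integer tensor (dtype torch.int64)

s = x.sum(1)                         # integer input is fine for sum
print(s.dtype)                       # torch.int64

# mean = sum / number of elements along the reduced dimension
xf = x.float()
print(torch.allclose(xf.mean(1), xf.sum(1) / xf.shape[1]))   # True

# Calling mean directly on the integer tensor raises a RuntimeError.
try:
    x.mean(1)
except RuntimeError as err:
    print("mean on int64 failed:", type(err).__name__)
```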

2.1 Summing over a single dimension: tensor.sum(dim)

Code example:

import torch

x = torch.arange(2* 3 * 4).view(2, 3, 4)

y = x.sum(0)  # sum over a single dimension (dim=0)

print("x.shape: ",x.shape)

print("x:")

print(x)

print("y.shape: ",y.shape)

print("y:")

print(y)
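For reference, a short sketch of summing over each single dimension in turn, using the same x. As with mean, the dimension that is summed over disappears from the result.

```python
import torch

x = torch.arange(2 * 3 * 4).view(2, 3, 4)

print(x.sum(0).shape)   # torch.Size([3, 4])
print(x.sum(1).shape)   # torch.Size([2, 4])
print(x.sum(2).shape)   # torch.Size([2, 3])

# Summing over the last dimension adds up each row of 4 values.
print(x.sum(2).tolist())   # [[6, 22, 38], [54, 70, 86]]
```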

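2.2 Summing over multiple dimensions: tensor.sum((dim1, dim2))

Code example: y = x.sum((0, 1)). The dimensions must be passed together as one tuple such as (0, 1), otherwise an error is raised. A list such as [0, 1] also works.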

import torch

x = torch.arange(2* 3 * 4).view(2, 3, 4)

y = x.sum((0,1))

print("x.shape: ",x.shape)

print("x:")

print(x)

print("y.shape: ",y.shape)

print("y:")

print(y)

Output:

x.shape: torch.Size([2, 3, 4])

x:

tensor([[[ 0, 1, 2, 3],

[ 4, 5, 6, 7],

[ 8, 9, 10, 11]],

[[12, 13, 14, 15],

[16, 17, 18, 19],

[20, 21, 22, 23]]])

y.shape: torch.Size([4])

y:

tensor([60, 66, 72, 78])
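A small sketch confirming that a tuple and a list of dimensions behave the same way, and that both match summing one dimension at a time:

```python
import torch

x = torch.arange(2 * 3 * 4).view(2, 3, 4)

by_tuple = x.sum((0, 1))
by_list = x.sum([0, 1])
chained = x.sum(0).sum(0)   # after the first sum, the old dim 1 becomes dim 0

print(by_tuple.tolist())               # [60, 66, 72, 78]
print(torch.equal(by_tuple, by_list))  # True
print(torch.equal(by_tuple, chained))  # True
```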


3. Using them together

Code example: tensor.mean(2).sum(0)

import torch

import numpy as np

import torchvision.transforms as transforms

# Use a single hand-made sample as the example

data = np.array([

[[1, 1, 1], [1, 1, 1], [1, 1, 1], [1, 1, 1], [1, 1, 1]],

[[2, 2, 2], [2, 2, 2], [2, 2, 2], [2, 2, 2], [2, 2, 2]],

[[3, 3, 3], [3, 3, 3], [3, 3, 3], [3, 3, 3], [3, 3, 3]],

[[4, 4, 4], [4, 4, 4], [4, 4, 4], [4, 4, 4], [4, 4, 4]],

[[5, 5, 5], [5, 5, 5], [5, 5, 5], [5, 5, 5], [5, 5, 5]]

], dtype='uint8')

# Convert the H x W x C array to a C x H x W tensor and scale the values to [0, 1]

data = transforms.ToTensor()(data)

# Add a batch dimension at the front

data = torch.unsqueeze(data, 0) # shape: torch.Size([1, 3, 5, 5])

nb_samples = 0.

# Create zero-initialized accumulators with one entry per channel (3 channels)

channel_mean = torch.zeros(3) # tensor([0., 0., 0.])

channel_std = torch.zeros(3) # tensor([0., 0., 0.])

print(data.shape) # torch.Size([1, 3, 5, 5])

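N, C, H, W = data.shape[:4] # unpack the shape: N=1, C=3, H=5, W=5

data = data.view(N, C, -1) # flatten H and W into one dimension, giving (batch, channel, pixels), so the mean and standard deviation can be taken per channel

print(data.shape) # torch.Size([1, 3, 25])

# After flattening, the H*W pixels sit in dim 2; mean(2) averages over them, and sum(0) adds up the results over the batch dimension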

channel_mean += data.mean(2).sum(0)

# Likewise, std(2) takes the standard deviation over the pixels in dim 2, and sum(0) adds up the results over the batch dimension

channel_std += data.std(2).sum(0)

# Count the samples seen so far (1 here)

nb_samples += N

# Divide by the number of samples to get the average per-channel mean and standard deviation

channel_mean /= nb_samples

channel_std /= nb_samples

print(channel_mean, channel_std)

# tensor([0.0118, 0.0118, 0.0118]) tensor([0.0057, 0.0057, 0.0057])
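The same accumulation pattern extends to several batches. The sketch below is one possible generalization, not the original author's code: the helper name channel_stats and the random batches are assumptions made for illustration, and torchvision is not needed. Note that it averages per-sample standard deviations, as the code above does, which is not identical to the standard deviation computed over all pixels of the dataset at once.

```python
import torch

def channel_stats(batches):
    """Average per-sample channel mean and std over (N, C, H, W) batches.

    Hypothetical helper that mirrors the accumulation pattern above.
    """
    channel_mean = None
    channel_std = None
    nb_samples = 0
    for data in batches:
        n, c = data.shape[:2]
        flat = data.reshape(n, c, -1)       # (N, C, H*W)
        if channel_mean is None:
            channel_mean = torch.zeros(c)   # one accumulator entry per channel
            channel_std = torch.zeros(c)
        channel_mean += flat.mean(2).sum(0) # sum of per-sample channel means
        channel_std += flat.std(2).sum(0)   # sum of per-sample channel stds
        nb_samples += n
    return channel_mean / nb_samples, channel_std / nb_samples

torch.manual_seed(0)
# Two made-up batches of 3-channel 5x5 "images" with values in [0, 1).
batches = [torch.rand(4, 3, 5, 5), torch.rand(2, 3, 5, 5)]
mean, std = channel_stats(batches)
print(mean.shape, std.shape)   # torch.Size([3]) torch.Size([3])
```

Because every sample here has the same number of pixels, the returned mean matches the mean taken over all samples and pixels of each channel; the returned std is only an average of per-sample values.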
